The AI Ethics Brief #170: How the US and China Are Reshaping AI Geopolitics

When deregulation meets multilateralism, privacy fails, and 'AI for Good' censors dissent.

Welcome to The AI Ethics Brief, a bi-weekly publication by the Montreal AI Ethics Institute. We publish every other Tuesday at 10 AM ET. Follow MAIEI on Bluesky and LinkedIn.

📌 Editor’s Note

In this Edition (TL;DR)

We examine the near-simultaneous release of competing AI governance visions, the US AI Action Plan and China's Global AI Governance Action Plan, and explore what this superpower bifurcation means for the rest of the world.

From this 30,000-foot view, we take a concrete look at what the US AI Action Plan means in practice through environmental concerns and state rights, showing how it treats local state autonomy as a barrier rather than a cornerstone of American federalism.

We explore privacy failures through the lens of thousands of ChatGPT conversations that became publicly searchable on Google, exposing deeply personal mental health discussions before the feature was removed, revealing the gap between user expectations and platform design.

We share two contrasting perspectives on the UN AI for Good Summit 2025: the troubling censorship of keynote speaker Dr. Abeba Birhane, alongside the concrete policy developments highlighted in our AI Policy Corner with GRAIL at Purdue University.

Finally, we conclude our four-part AVID blog series on red teaming as critical thinking, introducing military-derived techniques like premortems and the Five Whys to help organizations identify AI system vulnerabilities through systematic analysis.

What connects these stories: The persistent gap between stated intentions and actual practice in AI governance, whether in international cooperation, environmental protection, user privacy, or ethical discourse, revealing how institutional priorities consistently override genuine accountability.

Brief #170 Banner Image Credit: Pink Office by Jamillah Knowles & Digit, featured in Better Images of AI, licensed under CC-BY 4.0.

🔎 One Question We’re Pondering:

When the world's two AI superpowers unveil competing visions within days of each other, are we witnessing strategic coordination or dangerous fragmentation in global AI governance?

On July 23, 2025, the Trump administration released Winning the Race: America’s AI Action Plan, the culmination of Executive Order 14179 signed on January 23, 2025. The Action Plan outlines over 90 Federal policy actions across three pillars: Accelerating Innovation, Building American AI Infrastructure, and Leading in International AI Diplomacy and Security. It was accompanied by three Executive Orders addressing “Woke AI” prevention in the federal government, accelerating data center permitting, and promoting AI technology exports.

Three days later, on July 26, 2025, China unveiled its Global AI Governance Action Plan at the World Artificial Intelligence Conference in Shanghai. The Governance Plan proposes a 13-point roadmap for multilateral cooperation and UN-anchored standards, part of Premier Li Qiang’s call for a “global AI cooperation organization” headquartered in Shanghai. Building on President Xi Jinping's 2023 Global AI Governance Initiative, the Governance Plan reflects China's ambition to shape global AI governance through infrastructure development, open-source collaboration, and international cooperation.

Taken together, these near-simultaneous releases signal a clear bifurcation:

U.S. Deregulation-First: The Action Plan explicitly dismantles Biden-era AI safeguards, removing references to bias, climate impact, and diversity, and insists federal agencies procure only “truth-seeking” and “ideologically neutral” systems. As Tech Policy Press warns us in two must-read articles on this topic, this is deregulation framed as innovation, privileging speed and scale over democratic safeguards, while also having the potential to create real harms from politicized AI design. Potential pitfalls include biased hiring algorithms, opaque public-sector AI tools for policing, inequitable health-care and education models, and the sidelining of climate-informed AI research.

Related: In Brief #168, we examined how AI-powered immigration enforcement has expanded predictive policing and surveillance capabilities, eroding civil liberties protections in the process.

China’s Multilateralism: Beijing’s Governance Plan positions China as the champion of trustworthy AI. It leverages development aid, standards harmonization, and UN forums to build soft power and set global norms, while simultaneously cultivating “homegrown alternatives” to Western AI stacks. Most notable is the Model-Chip Ecosystem Innovation Alliance, announced July 28 at the conclusion of the World Artificial Intelligence Conference in Shanghai, which unites Chinese LLM developers and domestic AI chip manufacturers, reducing reliance on foreign technology and bolstering China’s standard-setting influence.

Global Implications: The View from Canada and Beyond

For Canada and other middle powers, this bifurcation presents both opportunities and risks. The US Action Plan’s emphasis on allied cooperation through full-stack American AI export packages suggests potential benefits for countries that align with American AI infrastructure. However, the administration's rejection of international AI safety frameworks could leave allies exposed to regulatory arbitrage and a race to the bottom in AI standards.

Canada's own AI governance approach, emphasizing human rights, transparency, and multilateral cooperation, sits between these two approaches. The question becomes: can smaller nations maintain sovereign AI governance standards when superpowers are racing to the bottom on safety while competing for technological dominance?

The Missing Middle Ground

What's most concerning is the risk of regulatory capture in AI governance. As discussed in the April 2025 edition of the Harvard Law Review, regulatory capture occurs when agencies created to act in the public interest instead advance commercial or political concerns of special interest groups. Both plans appear designed to serve corporate interests through different mechanisms, while genuine public interest considerations are relegated to secondary status.

The rapid succession of these announcements suggests we're entering a new phase of AI geopolitics where governance frameworks become tools of strategic competition rather than collaborative efforts to manage shared risks. For the international community, this raises fundamental questions about whether effective AI governance is possible in an increasingly multipolar world where technological sovereignty overshadows cooperative safety measures.

Looking Forward

As AI systems become more powerful and pervasive, the stakes of this governance competition extend far beyond national competitiveness. Climate models, medical diagnoses, financial systems, and democratic processes all depend on AI systems whose development is now explicitly caught between competing ideological and geopolitical frameworks.

The next few months will reveal whether this represents a temporary divergence or a permanent fracture in global AI governance. For Canada and other nations seeking to chart an independent course, the challenge is maintaining a commitment to evidence-based, rights-respecting AI governance while navigating pressure to choose sides in the technological competition between Washington DC and Beijing.

Recommended Reading: What to make of the Trump administration’s AI Action Plan (Brookings)

Please share your thoughts with the MAIEI community:

Are we witnessing the beginning of parallel AI ecosystems, or can international cooperation on AI governance survive this moment of strategic competition?

🚨 Here’s Our Take on What Happened Recently

Environmental Concerns and State Rights Under Trump’s AI Action Plan

President Trump’s aim to “do everything possible to expedite construction of all major AI infrastructure projects” includes sacrificing national land and environmental protections, as revealed in the AI Action Plan. Notably, the Action Plan provides policy recommendations such as exempting data centers from National Environmental Policy Act (NEPA) provisions, constructing data centers on federal lands, accelerating environmental permitting, and considering Clean Water Act Section 404 permits to allow data centers to discharge dredged or fill materials into water sources. Moreover, the plan seeks to “consider a state’s AI regulatory climate when making funding decisions,” and decrease funding for states with heightened regulations.

📌 MAIEI’s Take and Why It Matters:

Such actions reflect a growing “win at all costs” mentality in the US-China tech arms race, rolling back Biden-era climate policies and exposing federal lands to environmental degradation, as the Trump administration works to bypass NEPA protections. Data centers, which house the physical infrastructure for AI tools, are known to have a significant environmental impact, especially on water availability and the electricity grid. While Texas faces severe droughts, its data centers are using millions of gallons of water, with 463 million gallons used between 2023 and 2024 alone.

Furthermore, as MAIEI reported in Brief #164, data centers are undermining energy stability in New Zealand and Spain. Although the AI Action Plan seeks to “Develop a grid to match the pace of AI innovation,” new projects to expand the grid often first require comprehensive multi-year impact studies. The electricity grid is strained already; new pressures cannot be added while the current infrastructure is insufficient.

In Brief #167, we argued that communities should exercise their autonomy to push back against the many problematic aspects of data center development projects. States like South Carolina have done precisely this, increasing electricity costs for data centers and other significant energy consumers. The state is also considering capping data center tax incentives to protect residents from spiking electricity costs. Despite these developments, the second-largest investment in South Carolina’s history, a $2.8 billion data center in Spartanburg County, was announced this spring. South Carolina’s example shows that community-driven pushback can safeguard resident interests without deterring headline-making investments.

Yet, Trump’s AI Action Plan will decrease funding to states with environmental and data center regulations deemed incompatible with rapid development, a chilling declaration in the wake of the One Big Beautiful Bill Act provision, which sought a 10-year moratorium on state AI regulation (the Senate ultimately removed this provision). Alongside the increased demand for locally destructive and state resource-taxing data centers, federal respect for state autonomy is dwindling.

By penalizing states for exercising stronger environmental or zoning rules, the AI Action Plan flips the usual respect for states’ rights on its head, treating local autonomy as a barrier rather than a cornerstone of American federalism.

ChatGPT Privacy Breach: When Shared Conversations Become Google Search Results

In late July 2025, thousands of ChatGPT conversations began appearing in Google search results after users clicked the platform's "Share" feature. Nearly 4,500 conversations became publicly indexed, including discussions about deeply personal details like addiction struggles, physical abuse experiences, and severe mental health issues.

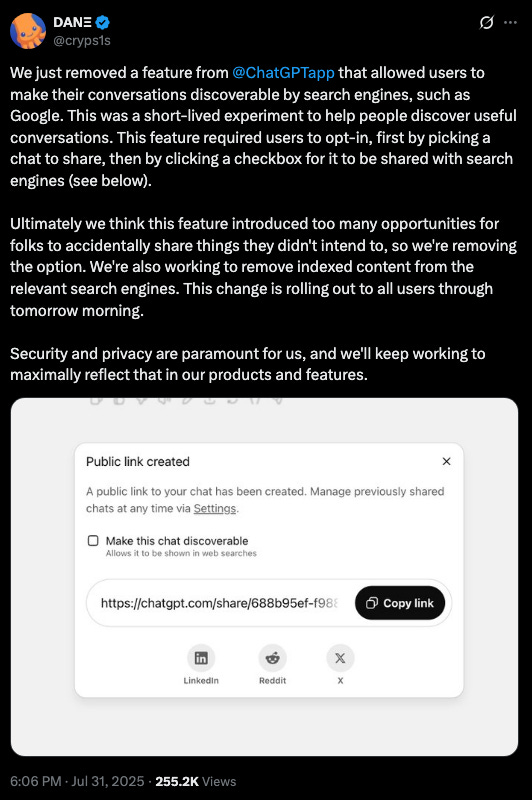

The mechanism was straightforward: when users clicked "Share" to send conversations to friends or save URLs, ChatGPT created publicly accessible links that weren't protected from Google's indexing. Many users appeared unaware that their conversations would become publicly searchable, presumably thinking they were sharing with a small audience.
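One common way to keep link-shareable pages out of search results is to serve them with a `noindex` directive, either as an `X-Robots-Tag` response header or as a robots meta tag in the page. The sketch below is illustrative only and makes no claim about how OpenAI implements shared links. The `SharedLink` class, its `discoverable` flag, and both helper functions are hypothetical names invented for this example.

```python
from dataclasses import dataclass


@dataclass
class SharedLink:
    """Hypothetical record for a shared conversation page."""
    url_path: str
    # Assumption: indexing is an explicit opt-in, so the default is False.
    discoverable: bool = False


def robots_headers(link: SharedLink) -> dict[str, str]:
    """Return HTTP response headers that control search-engine indexing."""
    if link.discoverable:
        # The user opted in, so no indexing restriction is sent.
        return {}
    # X-Robots-Tag asks compliant crawlers not to index the page or follow its links.
    return {"X-Robots-Tag": "noindex, nofollow"}


def robots_meta_tag(link: SharedLink) -> str:
    """Return the equivalent HTML meta tag for the page <head>, or an empty string."""
    if link.discoverable:
        return ""
    return '<meta name="robots" content="noindex, nofollow">'


if __name__ == "__main__":
    private_link = SharedLink("/share/example-id")
    print(robots_headers(private_link))
    print(robots_meta_tag(private_link))
```

Defaulting `discoverable` to `False` keeps a shared page out of search indexes unless the user deliberately opts in, which is closer to what most people expect when they send a link to a friend. These directives only affect crawlers that honor them; the link itself remains accessible to anyone who has the URL.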

OpenAI's Chief Information Security Officer, Dane Stuckey, quickly announced the removal of the feature after widespread criticism, describing it as "a short-lived experiment to help people discover useful conversations." However, OpenAI maintains that users had to explicitly select an option to make chats visible in web searches.

The timing is particularly concerning: in a survey from earlier this year, nearly half of American respondents said they had used large language models for psychological support in the past year, with 75% seeking help with anxiety and nearly 60% with depression.

📌 MAIEI’s Take and Why It Matters:

This incident exposes a fundamental misalignment between how users understand AI privacy and how these systems actually work. While OpenAI claims users had to explicitly select search visibility, the real issue is the cognitive gap between user intent and platform design.

When someone shares a ChatGPT conversation, their mental model involves targeted sharing, like forwarding a text message. The leap from “sharing with a friend” to “making globally searchable” represents a design failure that prioritizes data exposure over user expectations. As privacy scholar Carissa Veliz noted about the incident:

“As a privacy scholar, I’m very aware that that data is not private, but of course, ‘not private’ can mean many things, and that Google is logging in these extremely sensitive conversations is just astonishing.”

This follows a troubling pattern where convenience features mask significant privacy trade-offs. Similar issues emerged with Meta's AI systems, which began sharing user queries in public feeds. As cybersecurity analyst Rachel Tobac observed:

“Many users aren't fully grasping that these platforms have features that could unintentionally leak their most private questions, stories, and fears.”

This incident represents more than individual privacy violations. It signals the normalization of what journalist and writer Isabelle Castro describes as our data becoming “the digital gossip of our time,” where corporations and governments collect personal information and are “poised to use something that is ours for their gain,” just as the gossip industry did in Warren and Brandeis's era.

When AI conversations about mental health, trauma, and personal struggles become searchable public records, it exposes the most intimate aspects of users' lives without their informed consent. Both the OpenAI and Meta AI incidents reveal a fundamental breakdown in the expectation that personal conversations, even with AI systems, maintain some boundary between private reflection and public exposure.

OpenAI CEO Sam Altman recently warned that user conversations with ChatGPT could be subpoenaed and used in court, noting, “If someone confides their most personal issues to ChatGPT, and that ends up in legal proceedings, we could be compelled to hand that over.” These incidents demonstrate why privacy must be designed into AI systems from the ground up, rather than addressed after public outcry.

Building trustworthy AI requires fundamentally rethinking how we architect these platforms to respect user mental models of privacy and provide meaningful control over personal data. As we integrate AI into intimate aspects of our lives, robust privacy protections become essential for maintaining the trust these systems need to function effectively.

Behind the 'AI for Good' Brand: When Industry Summits Censor Dissent

While the UN's AI for Good Global Summit 2025, held from July 8 to 11 in Geneva, brought together over 11,000 participants to discuss AI governance and launched several new initiatives, a troubling incident of censorship revealed the gap between the summit's stated mission and its actual treatment of critical voices. Dr. Abeba Birhane, a leading AI ethics researcher, was reportedly pressured by conference organizers at the International Telecommunication Union (ITU) to remove critical content from her keynote speech.

According to a blog post published by the Artificial Intelligence Accountability Lab (AIAL) at Trinity College Dublin, Dr. Birhane was forced to take down slides, remove anything that mentioned “Gaza,” “Palestine,” or “Israel,” and change the word “genocide” to “war crimes,” until only a single slide calling for “No AI for War Crimes” remained.

The controversy intensified when organizers allegedly gave her an ultimatum just two hours before her scheduled talk. Despite the pressure, Dr. Birhane proceeded with a heavily modified version of her presentation, later publishing both the original and censored versions of her slides to highlight the extent of the censorship at what's supposed to be a “social good” event.

The general global trend towards authoritarianism, censorship, and the silencing of academics and journalists alike who stand up for fundamental rights, the rule of law, and justice is difficult to deal with in the current climate. But for a Summit that claims that “AI for Good remains firmly aligned with the collective priorities of the international community” and that “[…] it is our responsibility to ensure that no one is left behind” to then censor an invited keynote speaker who advocates for confronting difficult issues and engaging in self-reflection is doubly disheartening.

📌 MAIEI’s Take and Why It Matters:

This incident exemplifies classic ethics washing, where organizations use “social good” branding like “AI for Good” to appear ethically motivated while simultaneously suppressing actual ethical critique. The irony is stark: a summit called “AI for Good” censoring a speaker who wanted to address how AI technologies are being used to cause harm.

As we covered in Brief #159, where we highlighted Seher Shafiq’s recap of RightsCon 2025, AI systems are being weaponized in conflicts worldwide. In Gaza, AI tools are being used with dubious targeting criteria, permitting up to 15-20 civilian deaths per "target," enabled by tech giants like Google and Amazon through Project Nimbus. Similar patterns have emerged with AI-driven persecution of Uyghurs and Facebook's AI-enabled role in actively promoting violence against Rohingyas.

This follows a familiar playbook across corporate social responsibility initiatives:

Tech Industry Examples:

Google's AI Ethics Board (2019) disbanded after only one week due to controversies surrounding the company’s selection of members.

Google's firing of AI ethics researchers Dr. Timnit Gebru (December 2020) and Dr. Margaret Mitchell (February 2021).

Facebook's Oversight Board, criticized as providing legitimacy while avoiding real accountability.

Broader Corporate Ethics Washing:

Greenwashing at climate summits while continuing harmful practices

Diversity initiatives focused on optics rather than systemic change

Corporate social responsibility programs that deflect from core business harm

The pattern reveals how “social good” or “ethics” becomes branding rather than genuine accountability. Organizations create ethics initiatives that provide legitimacy, control narratives, deflect criticism, and suppress dissent when real concerns threaten business interests.

As Asma Derja of the Ethical AI Alliance put it bluntly: “You cannot claim to lead conversations on AI for Good while censoring ethical critique.”

Did we miss anything? Let us know in the comments below.

💭 Insights & Perspectives:

AI Policy Corner: AI for Good Summit 2025

This edition of our AI Policy Corner, produced in partnership with the Governance and Responsible AI Lab (GRAIL) at Purdue University, examines conference proceedings from the UN AI for Good Global Summit 2025. Our story in this edition, Behind the 'AI for Good' Brand: When Industry Summits Censor Dissent, highlights concerning censorship issues at the summit. Our GRAIL partner Alexander Wilhelm attended the event and provides insight into the concrete policy developments that emerged, including the launch of an AI Standards Exchange Database covering over 700 standards, publication of the UN Activities on AI Report identifying 729 AI projects across 53 UN entities, and UNICC's new AI Hub for training UN employees. These initiatives reflect the summit's stated multistakeholder approach, even as questions remain about whose voices are truly welcomed in these “AI for good” conversations.

To dive deeper, read the full article here.

Red Teaming is a Critical Thinking Exercise: Part 4

In the final installment of the AVID blog series, the authors bridge strategic and tactical red teaming by introducing critical thinking tools from military doctrine, specifically premortems and the Five Whys technique, to identify organizational vulnerabilities before they manifest.

Drawing from the U.S. Army's Red Team Handbook, they demonstrate how premortems can help teams anticipate AI system failures by assuming failure from the outset and working backward to identify potential causes, while the Five Whys helps uncover the root purposes and risks of AI initiatives through systematic questioning across different organizational perspectives. The piece emphasizes that tactical-level red teaming should be driven by concerns uncovered through strategic analysis, using technical tools like Garak, PyRIT, and Counterfit to investigate specific risks rather than conducting unfocused adversarial testing.

Ultimately, the authors conclude that effective red teaming requires cross-organizational participation and should function as a comprehensive critical thinking exercise that strengthens AI systems through systematic analysis rather than mere technical vulnerability hunting.

Parts 1, 2 and 3 can be found here.

To dive deeper, read the full article here.

❤️ Support Our Work

Please help us keep The AI Ethics Brief free and accessible for everyone by becoming a paid subscriber on Substack or making a donation at montrealethics.ai/donate. Your support sustains our mission of democratizing AI ethics literacy and honours Abhishek Gupta’s legacy.

For corporate partnerships or larger contributions, please contact us at support@montrealethics.ai

✅ Take Action:

Have an article, research paper, or news item we should feature? Leave us a comment below — we’d love to hear from you!
