AI Ethics #16: Ubuntu ethics, chief data ethics officers, deepfakes for corporate training, social biases in NLP as barriers for people with disabilities and more ...

Green lighting ML, social work thinking for UX and AI design, algorithms keeping students out of universities, baking ethics into AI design, and more from the world of AI Ethics!

Welcome to the sixteenth edition of our weekly newsletter that will help you navigate the fast changing world of AI Ethics! Every week we dive into research papers that caught our eye, sharing a summary of those with you and presenting our thoughts on how it links with other work in the research landscape. We also share brief thoughts on interesting articles and developments in the field. More about us on: https://montrealethics.ai/about/

If someone has forwarded this to you and you want to get one delivered to you every week, you can subscribe to receive this newsletter by clicking below:

Summary of the content this week:

In research summaries this week, we take a look at Ubuntu as an ethical and human rights framework for AI governance, how principles alone cannot guarantee ethical AI, social work thinking for UX and AI design, overcoming barriers to cross-cultural cooperation in AI ethics and governance, and bias in recruiting algorithms.

In article summaries this week, we take a look if the business world is ready for a Chief Data Ethics Officer, an update on AI and responsible innovation work from Google, the benefits of approaching ethics in AI by baking it in rather than sprinkling it on, an algorithm that is keeping IB students out of college, the use of deepfakes in creating corporate training videos, and how asking an AI system to explain itself might lead to more problems than not.

MAIEI Community Initiatives:

Our learning communities and the Co-Create program continue to receive an overwhelming response! Thank you everyone!

We operate on the open learning concept where we have a collaborative syllabus on each of the focus areas and meet every two weeks to learn from our peers. You can fill out this form to receive an invite!

MAIEI Living Dictionary project:

The Living Dictionary was designed by the Montreal AI Ethics Institute to inspire and empower you to engage more deeply in the field of AI Ethics. With technical computer science and social science terms explained in plain language, the Living Dictionary aims to make the field of AI ethics more accessible, no prior knowledge necessary! We hope that the Living Dictionary will encourage you to join us in shaping the trajectory of ethical, safe and inclusive AI development.

Stay tuned for this one — we’ll have more to share next week!

MAIEI Serendipity Space:

Starting on July 23, 2020 we are launching a space for unstructured, free-wheeling conversations with the MAIEI Staff and AI Ethics community at large to talk about whatever is on your mind in terms of building responsible AI systems. We will be talking about research, news, academia, industry, policy, and anything else that you have on your mind. This is a place for human connection in distanced times - we look forward to having you join us!

This will be a 30 minutes session from 12:15 pm ET to 12:45 pm ET so bring your lunch (or tea/coffee)! Register here to get started!

MAIEI at ICML 2020:

ICML is one of the premier machine learning conferences in the world and MAIEI is happy to share that some of our research work has been selected for presentation at workshops there:

Our researchers Abhishek Gupta and Erick Galinkin presented their work on Green Lighting ML: Confidentiality, Integrity, and Availability of Machine Learning Systems in Deployment at the ICML 2020 workshop on Deploying and Monitoring Machine Learning Systems.

Our researchers Abhishek Gupta and Camylle Lanteigne along with Sara Kingsley from Carnegie Mellon University presented their work on SECure: A Social and Environmental Certificate for AI Systems at the ICML 2020 workshop on Deploying and Monitoring Machine Learning Systems.

State of AI Ethics June 2020 report:

We released our State of AI Ethics June 2020 report which captured the most impactful and meaningful research and development from across the world and compiled them into a single source. This is meant to serve as a quick reference and as a Marauder’s Map to help you navigate the field that is evolving and changing so rapidly. If you find it useful and know others who can benefit from a handy reference to help them navigate the changes in the field, please feel free to share this with them!

Research:

Let's look at some highlights of research papers that caught our attention at MAIEI:

From Rationality to Relationality: Ubuntu as an Ethical & Human Rights Framework for Artificial Intelligence Governance by Sabelo Mhlambi

The paper aims to shift the centred belief that a person is a person through being rational. Instead, Mhlambi presents the Ubuntu framework to argue that it is actually how a person endeavours to emphasise the relationality between humans that marks them as a person. Personhood is no longer presented with the benchmark of the quality of rationality, but rather represented by the state of relationality. Mhlambi develops this through his historical account of how rationality became the locus of personhood in Western thought, and then demonstrates how this goes against the very essence of the Ubuntu framework. Problems such as data coloniality and surveillance capitalism are explored, linking to AI’s problems concerning marginalised communities and how they are represented. These are wrapped up in his 5 critiques of AI, which she suggests are tackled by the realisation of Ubuntu. He then concludes with what an Ubuntu internet would look like, and wrapping up a piece full of facts, realisations, and food for thought.

To delve deeper, read our full summary here.

Principles alone cannot guarantee ethical AI by Brent Mittelstadt

AI Ethics has been approached from a principled angle since the dawn of the practice, drawing great inspiration from the 4 basic ethical principles of the medical ethics field. However, this paper advocates how AI Ethics cannot be tackled in the same principled way as the medical ethics profession. The paper bases this argument on 4 different aspects of the medical ethics field that its AI counterpart lacks, moving from the misalignment of values in the field, to a lack of established history to fall back on, to accountability and more. The paper then concludes by offering some ways forward for the AI Ethics field, emphasizing on how ethics is a process, and not a destination. Translating the lofty principles into actionable conventions will help realize the true challenges that face AI Ethics, rather than treating it as something that is to be “solved”.

To delve deeper, read our full summary here.

Social Work Thinking for UX and AI Design by Desmond Upton Patton

What if tech companies dedicated as much energy and resources to hiring a Chief Social Work Officer as they did technical AI talent (e.g. engineers, computer scientists, etc.)? If that was the case, argues Desmond Upton Patton (associate professor of social work, sociology, and data science at Columbia University, and director of SAFElab), they would more often ask: Who should be in the room when considering “why or if AI should be created or integrated into society?”

By integrating “social work thinking” into their process of developing AI systems and ethos, these companies would be better equipped to anticipate how technological solutions would impact various communities. To genuinely and effectively pursue “AI for good,” there are significant questions that need to be asked and contradictions that need to be examined, which social workers are generally trained to do. For example, Google recently hired individuals experiencing homelessness on a temporary basis to help collect facial scans to diversity Google’s dataset for developing facial recognition systems. Although on the surface this was touted as an act of “AI for good,” the company didn’t leverage their AI systems to actually help end homelessness. Instead, these efforts were for the sole purpose of creating AI systems for “capitalist gain.” It’s likely this contradiction would have been noticed and addressed if social work thinking was integrated from the very beginning.

To delve deeper, read our full summary here.

Overcoming Barriers to Cross-Cultural Cooperation in AI Ethics and Governance by Seán S. ÓhÉigeartaigh, Jess Whittlestone, Yang Liu, Yi Zeng and Zhe Liu

As AI development continues to expand rapidly across the globe, reaping its full potential and benefits will require international cooperation in the areas of AI ethics and governance. Cross-cultural cooperation can help ensure that positive advances and expertise in one part of the globe are shared with the rest and that no region is disproportionately negatively impacted by the development of AI. At present, there a series of barriers that limit the capacity states to conduct of cross-cultural cooperation, ranging from the challenges of coordination to cultural mistrust. For the authors, misunderstandings and mistrust between cultures is often more of a barrier to cross-cultural cooperation rather than fundamental differences in ethical principles. Other barriers include language, a lack of physical proximity, and immigration restrictions which hamper on possibilities for collaboration. The authors argue that despite these barriers, it is still possible for states to reach consensus on principals and standards for certain areas of AI.

To delve deeper, read our full summary here.

From our learning communities:

Research that we covered in the learning communities at MAIEI this week, summarized for ease of reading:

Legal Risks of Adversarial Machine Learning Research by Ram Shankar Siva Kumar, Jonathon Penney, Bruce Schneier, Kendra Albert

Adversarial attacks have existed for years now. There are currently no structured legal framework to deal with adversarial attacks including terms of service for ML use or the regulatory framework for ML environment/ model themselves.

The paper analyses the confusing landscapes ahead for legal practitioners and the risks that are there for ML researchers in the current environment.

To drive this point, the researchers reflect upon a specific regulation (Computer Fraud and Abuse Act) and a specific clause that defines the offence as intentional access without authorization or exceeding authorized access; obtaining any information from a protected computer; intentionally causing damage and by knowingly transmitting a program, information, code or command.

The paper also reflects on how the courts are divided between a broad interpretation (exceeding authorized access itself is an offence) and narrow interpretation (exceeding authorized access for improper purpose).

The researchers also classify the type of attacks into exploratory attacks, poisoning attacks, attack on ML environment and attack on software dependencies. The researchers conclude that in their view the Supreme Court is expected to take a narrow view and mention that the narrow view may encourage ML security researchers to pursue their efforts towards such exploits for a better robustness of the ML environments/ models.

To delve deeper, read our full summary here.

Articles:

Let’s look at highlights of some recent articles that we found interesting at MAIEI:

Is The Business World Ready For A Chief Data Ethics Officer? (Forbes)

The article highlights some of primary concerns that an organization might face as it utilizes data to make inferences about their customers or users. Going into details about how privacy risks abound in the use of proxy data from which private details like sexual orientation or political preferences might be inferred, the article highlights how there is a need for data ethics officers who can help address or mitigate some of these concerns. Most people are familiar with how such inferred details can be used to subvert the integrity of democratic institutions by manipulating people and persuading them for political gains. What is essential here is to understand how such consequences can be mitigated through the appointment of someone who would have a fiduciary responsibility to data subjects, something that could potentially be folded into this role.

A position like this would empower the organization to enact top-down change where there is a central authority that is responsible for organization-wide practices to ensure the ethical use of data. Discussions around the bias in these systems have been hotly debated and the opacity surrounding the operations of these systems only exacerbates the problem. A Chief Data Ethics Officer can potentially help to align the existing business practices with these responsible data principles which would be crucial for adoption and implementation rather than just the discussion of this.

Finally, in a highly competitive space, this can become a strong differentiator for an organization in terms of minimizing reputational risk, enhancing employee retention and recruitment, and increasing compliance with regulation. The important thing would be to empower this role in a way to lead to successful, measurable outcomes rather than just paying lip-service and checking off a box.

An update on our work on AI and responsible innovation (Google Blog)

This post penned by Jeff Dean from Google highlights some of the work that they have done in putting responsible AI principles in practice within their organization. It serves as a useful guide for others who are aiming to do the same. One of the initiatives that caught our eye was the deployment of a mandatory technology ethics course trialed with several employees. It is akin to secure coding practices that new hires have to undertake before they are allowed to write a single line of code. It is essential in creating a groundswell of interest and adoption across the organization.

Though they highlight some of the work done internally in operationalizing ethics, the deployment rates appear to be quite low, which means there is still a lot of work to be done. They provide some details on their internal review process: it is an iterative approach that factors in internal domain expertise. When relevant, they have also chosen to engage external experts to understand the societal impacts of their solutions. Illustrating their learning from previous work, they mention how Google TTS and Google Lens have incorporated feedback to make these technologies more ethical. As an example, for Google TTS, they added additional layers of protection to prevent misuse by bad actors. They also limited open-sourcing the TTS solution to prohibit the creation of deepfakes.

The final idea that was quite exciting for us at MAIEI was the use of community and civil-society based bodies to consult on ethical AI implications. They used insights from the discussion with this body to shape their operational efforts and decision-making frameworks. Adopting a transparent approach in how responsible AI principles are being developed and deployed will be crucial in evoking a high degree of trust from users.

Not Just The Sprinkles On Top: Baking Ethics Into AI Design (Forbes)

An interesting article that rehashes notions from the field of cybersecurity and presents them in a new light (unfortunately, it doesn’t make that attribution). Both the efficacy and cost of mitigation measures are better in the earlier stages of development. Our founder Abhishek Gupta has referenced this principle in many of his talks of “baking in, rather than bolting on” principle to applying ethics to AI. The use of interdisciplinary teams has the potential to improve current processes. Specifically, it allows unearthing blindspots and making the entire pipeline of design, development, and deployment more ethical.

There is also a need to think of the guidelines as being tailored for different use cases. For example, Roombas evoke different reactions compared to those from Alexa. An application should convey the right signals to match the expectations and sensibilities of the users.

Another interesting consideration is to think about the high-impact scenarios that have the potential to impact human lives. For example, self-driving vehicles should be able to provide explanations as do medical systems in how they arrive at particular decisions. To reach ubiquitous deployment, we need to evoke a high degree of trust from consumers. The onus to make that lies on the shoulders of the organizations developing these systems.

Meet the Secret Algorithm That's Keeping Students Out of College (Wired)

The IB board adopted an algorithmic approach to providing scores to students because of the ongoing pandemic disrupting in-person exams. As we have discussed many times in past editions of this newsletter, such solutions are not free of potentially harmful consequences. Students, parents, and educators alike are questioning the underlying mechanisms of this algorithmic system. It has become a hotly debated issue in popular media because of the life-altering consequences that this has for the students in the current cohort.

The article points to cases where students who had received conditional admission offers and scholarships have seen those rescinded because of inadequate performance in the IB evaluation.

One of the issues pointed out is how for schools where there was limited enrolment, the model would have flaws because it utilizes information from other schools to infer the results. The IB foundation defended the system by pointing out how it crafts a bespoke equation for each school. But, for schools that don't have much of a track record, this can be a red flag where scores are calculated differently for different students. For small classes, since there are fewer data points, the system is bound to generate noisier results. Ultimately, when operating automated systems that don't have adequate transparency, the burden for existence should fall on the shoulders of the organization.

Deepfakes Are Becoming the Hot New Corporate Training Tool (Wired)

As covered in this newsletter, deepfakes are finding new, positive use cases. This article points to how they are being used to create corporate training videos. In the current pandemic where filming in-person is not possible, the use of such tools offers a unique opportunity to leverage this technology. The agencies providing AI-enabled solutions point to the benefits that such generated content has in terms of providing more diversity in both the voices and appearances of the avatars in the videos.

Typically for small, resource-constrained organizations, they would be limited in their visual story-telling capabilities when they don't have access to large creative agencies. But, the use of such services levels the playing field and allows smaller organizations to compete in the marketing arena with bespoke visuals. Another benefit of utilizing generated digital avatars is that they enable seamless translation into multiple languages allowing locally-tailored content.

The avatars utilize the likeness of real people. These people are compensated based on the amount of footage used in creating these videos. One of the CEOs interviewed also pointed to the benefit of widening beauty standards as the videos will allow for more diversity in the avatars compared to real actors. The one caveat that researchers emphasized is how such efforts might lull companies into a false sense of accomplishment in achieving diversity while creating little, real change on the ground.

Why asking an AI to explain itself can make things worse (MIT Tech Review)

Of late, there have been a lot of calls to have explainable AI systems. This article goes into detail on why that might be problematic. One of the reasons to ask for explanations is so that people have the chance to understand why a system made a decision and be empowered to agree or disagree with it. The article talks about glassbox models that are simplified versions of neural networks that enable easy tracking of how data gets used and how decisions get made. But, for more complicated problems where simplistic approaches don't work well, there is a need to rely on more complex models. There is still the potential to use glassbox models such that they get used initially to identify problems with the datasets followed by a more complex model used on top of that. Visualizations are also known to aid in explainability.

But, the researchers point out pitfalls with this demand for explainability. Specifically, they emphasize how humans tend to overtrust the system when provided explanations leading to the token human problem. As an example, the researchers talk about visualizations and how the people they surveyed didn't even understand what the visuals indicated. A phrase commonly used to describe this behavior dubbed "mathwashing" is where humans trust machines because of their numerical nature. One of the goals for these explanations is to provoke critical reflection, and not to elicit blind trust from humans. Tailoring the explanation to the audiences will also enhance their utility.

From the archives:

Here’s an article from our blogs that we think is worth another look:

Social Biases in NLP Models as Barriers for Persons with Disabilities by Hutchinson et al.

When studying for biases in NLP models, there is not enough of a focus on the impacts that phrases related to disabilities has on the more popular models and how it skews and biases downstream tasks especially when using popular models like BERT and using tools like Jigsaw to do toxicity analysis of phrases. This paper presents an analysis of how toxicity changes based on the use of recommended vs. non-recommended phrases when talking about disabilities and how results are impacted when using them in downstream contexts such as when writers are nudged to use certain phraseology that moves them away from expressing themselves fully reducing their dignity and autonomy. It also looks at the impacts that this has in online content moderation whereby there is a disproportionate impact on the communities because of the heavy bias in censoring content that has these phrases even when they might be used in constructive contexts such as communities discussing the conditions and engaging with other hate speech to debunk myths. Given that more and more content moderation is being turned over to automated tools, this has the potential to suppress the representation of people with disabilities in online fora where they discuss using such phrases thus also skewing the social attitudes and perception of the prevalence of these conditions as being less prevalent than they actually are. The authors point to a World Bank study that mentions that approximately 1 billion people around the world have some form of disability.

They also look at the biases that are captured in the BERT model where there is a negative association between the recommended phrases for disability and associations with things like homelessness, gun violence, and other socially negative terms leads to a slant that impacts and shapes the representations of these words that are captured in the models. Since such models are used widely in many downstream tasks, the impacts are amplified and present themselves in unexpected ways. The authors finally make some recommendations on how to counter some of these problems by involving communities more directly and learning how to be more representative and inclusive. Making disclosures about the places where the models are appropriate to use, where they shouldn’t be used, and the underlying datasets that were used to train the system can also help people make more informed decisions about when to use and when not to use these systems so that they don’t perpetuate harm on their users.

To delve deeper, read the full article here.

Guest contributions:

Why was your job application rejected: Bias in Recruitment Algorithms? (Part 2)

Building on part 1 from last week, this post continues the discussion of bias in recruitment algorithms. Following is an excerpt from the article:

So let’s assume your application was one of the ones ranked high in the matching and sourcing platform, and the recruiter clicked your name to process you in to the next stage where you are screened against the company’s preferred criteria. Whether it is through hard-coded questions and filters built into the system, or machine learning algorithms which make decisions, the screening process helps to reduce the number of applications as it goes through your CV / resume and picks up the skills and information (degree, GPA, years of experience, fluency in spoken or technical languages, etc). Whatever the software was able to read (or parse) from your CV, the data points are then matched with the desired points for the specific role. The candidates who have matching points may then again be ranked according to the degree or percentage of match. However, the bigger bias issues in this stage have to do with data out of which the algorithm was created and what kind of a model makes the predictions.

To delve deeper, read the full article here.

If you’ve got an informed opinion on the impact of AI on society, consider writing a guest post for our community — just send your pitch to support@montrealethics.ai. You can pitch us an idea before you write, or a completed draft.

Events:

As a part of our public competence building efforts, we host events frequently spanning different subjects as it relates to building responsible AI systems, you can see a complete list here: https://montrealethics.ai/meetup

AI Ethics: Ontario Government Alpha Principles on AI

July 22, 11:45 AM - 1:15 PM ET (Online)

AI Ethics: UNESCO AI Ethics Public Consultation

July 29, 11:45 AM - 1:15 PM ET (Online)

You can find all the details on the event page, please make sure to register as we have limited spots (because of the online hosting solution).

From elsewhere on the web:

Things from our network and more that we found interesting and worth your time.

Announcing nominees for the second annual Women in AI Awards by VentureBeat

We are very proud of our staff AI Ethics Researcher Tania De Gasperis for being nominated in the AI Research category and former Sr. Associate Mirka Snyder Caron for being nominated in the Responsibility and Ethics of AI category!!

A huge congratulations to all the fantastic women on this list for pushing frontiers in the domain of AI, we look to them for leadership and inspiration.

Call to our community for help!

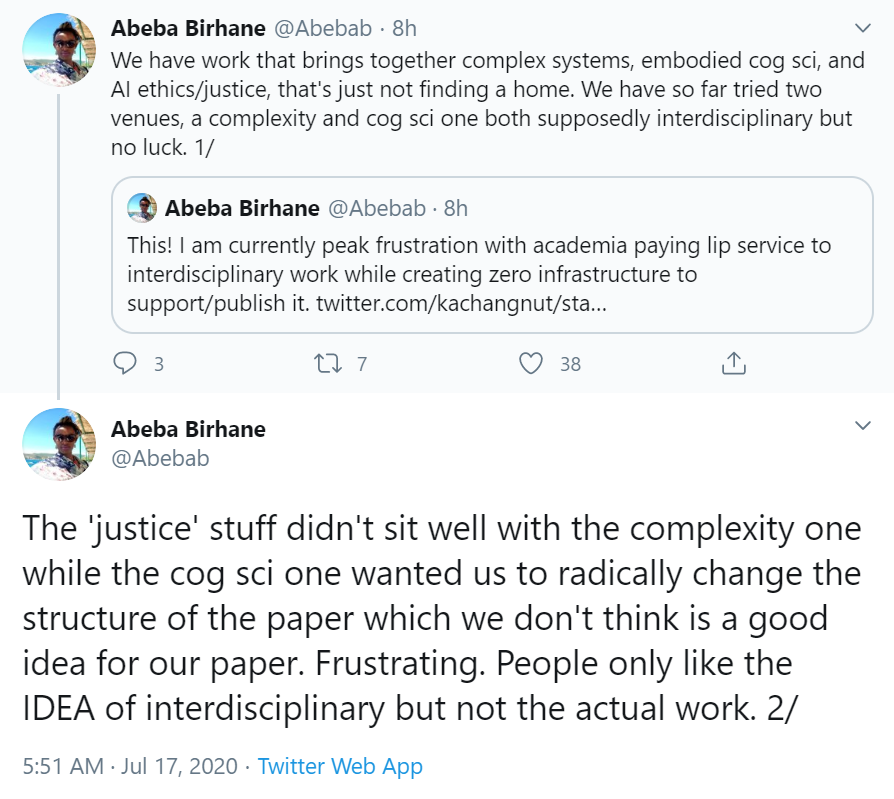

Abeba Birhane is a highly respected scholar doing some fantastic work and we wanted to share this ask from her with the community in helping them find an appropriate venue to publish their work that sits on the intersection of AI ethics/justice, embodied cognitive science, and complex systems.

Please feel free to reach out to Abeba Birhane (abeba.birhane@ucdconnect.ie) and help her find an appropriate work venue for their work.

Signing off for this week, we look forward to it again in a week! If you enjoyed this and know someone else that can benefit from this newsletter, please share it with them!

If you have feedback for this newsletter or think there is an interesting piece of research, development or event that we missed, please feel free to email us at support@montrealethics.ai