The AI Ethics Brief #161: Creator Content & Data Sovereignty

Exploring how AI systems, creator platforms, and data governance quietly reshape power and accountability.

Welcome to The AI Ethics Brief, a bi-weekly publication by the Montreal AI Ethics Institute. Stay informed on the evolving world of AI ethics with key research, insightful reporting, and thoughtful commentary. Learn more at montrealethics.ai/about.

Follow MAIEI on Bluesky and LinkedIn.

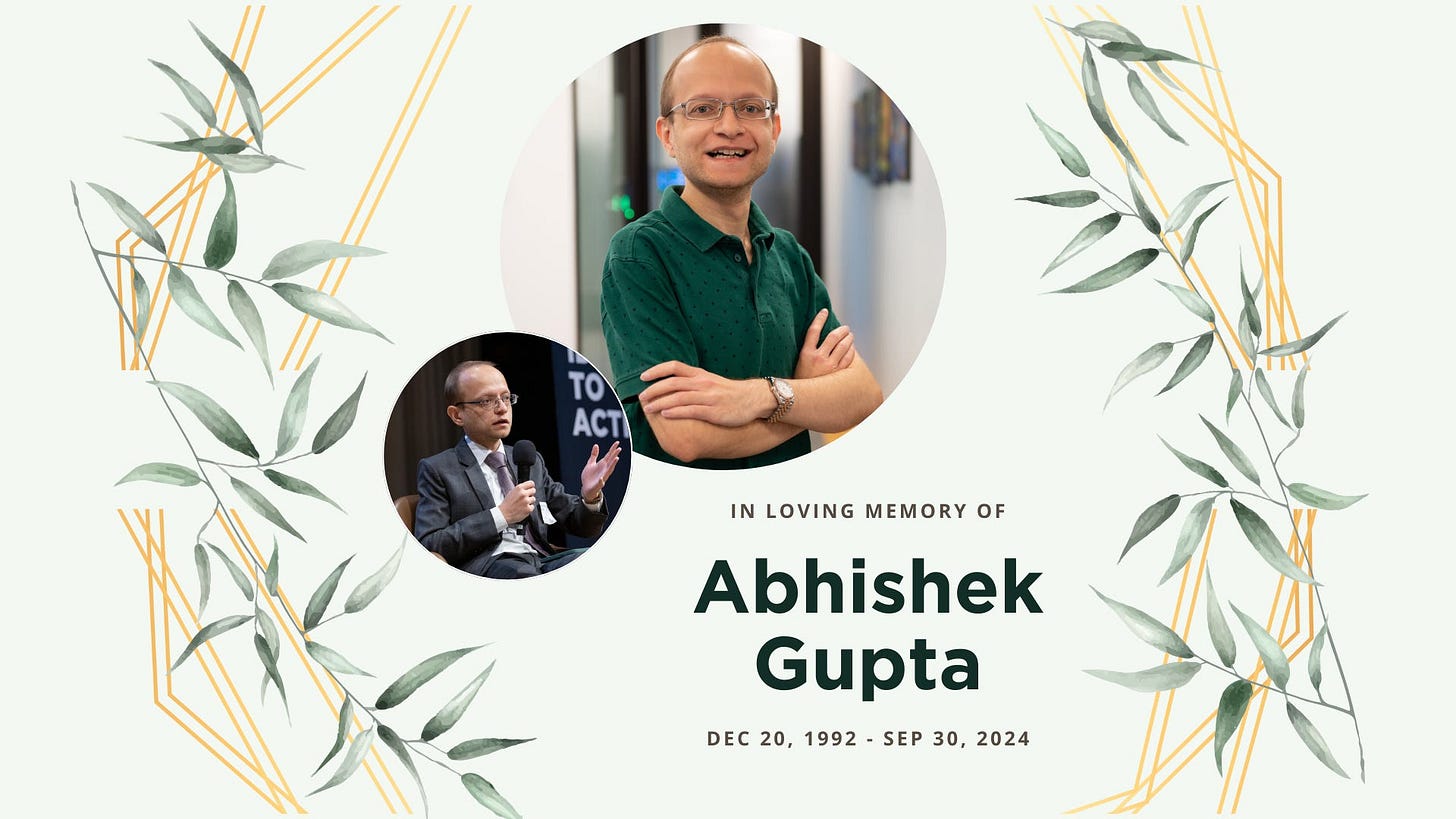

Join us as we honour the life and legacy of Abhishek Gupta, founder of the Montreal AI Ethics Institute (MAIEI), at a memorial gathering on Thursday, April 10, from 6:30 PM to 8:30 PM in Montreal, Quebec, Canada.

This will be an in-person event. If you're interested in a Zoom option, please register using the relevant ticket, and we’ll follow up with details.

To learn more about Abhishek or share a memory, please visit his digital memorial.

In This Edition:

🚨 Here’s Our Take on What Happened Recently:

CoreWeave and Canada: A Data Sovereignty Wake-Up Call

Ghibli-Gate and the Melting GPUs

xAI Buys X: Real-Time Data, Zero Real Consent

Meta, Pirated Books, and the Consent Crisis in AI

🔎 One Question We’re Pondering:

Are today’s AI systems designed to obey rather than to question, and what does this mean for democracy, science, and society?

💭 Insights & Perspectives:

Why MCP Won - Latent Space

Dispelling Myths of AI and Efficiency - Data & Society

AI Countergovernance: Lessons Learned from Canada and Paris - Tech Policy Press

📄 Article Summaries:

Apple’s AI isn’t a letdown. AI is the letdown. - CNN

Why handing over total control to AI agents would be a huge mistake - MIT Technology Review

As Data Centers Push into States, Lawmakers are Pushing Right Back - Tech Policy Press

💬 Your AI Ethics Question, Answered:

Results from Our Last Poll: How can AI governance balance human oversight with the efficiency gains of automation?

📖 From Our Living Dictionary:

What do we mean by “Compute”?

🚨 Here’s Our Take on What Happened Recently

CoreWeave and Canada: A Data Sovereignty Wake-Up Call

What Happened: CoreWeave, a U.S.-based AI infrastructure company specializing in GPU-powered cloud computing, went public on Nasdaq last week at a $23 billion valuation on a fully diluted basis. The IPO raised $1.5 billion, with shares priced at $40 each, below the expected range, indicating investor caution in the AI infrastructure sector. Backed by Nvidia, CoreWeave has grown rapidly through contracts with firms like OpenAI and Microsoft. Notably, it has also partnered with Canadian AI startup Cohere. As part of the C$2-billion Canadian Sovereign AI Compute Strategy, the federal government has committed up to C$240 million to Cohere’s C$725 million project to purchase AI compute from a new CoreWeave-operated data centre in Canada, intended to help train large language models (LLMs) domestically.

📌 MAIEI’s Take and Why It Matters:

This arrangement raises urgent questions around data sovereignty in Canada. Despite the investment, CoreWeave remains a foreign entity, meaning Canadian taxpayer funds will support infrastructure and profit flows that largely remain outside the country.

Allocating public money to U.S.-based infrastructure risks outsourcing not only compute capacity but also control over critical AI assets, innovation pipelines, and national IP. As investor John Ruffolo and others have rightly pointed out, funding a foreign provider does little to advance true AI sovereignty. Instead of investing in domestic GPU capacity or incentivizing Canadian AI infrastructure players, public dollars are going abroad.

This is not just a procurement issue; it’s a digital autonomy issue. If Canada wants a meaningful role in shaping global AI systems, we need to invest locally in infrastructure, talent, and IP. The fact that profits and governance remain beyond our borders weakens our leverage over privacy, security, and the direction of responsible AI development. It’s time to treat tech infrastructure not just as an expenditure but as a core pillar of national sovereignty.

Ghibli-Gate and the Melting GPUs

What Happened: OpenAI's new image generation tool, powered by GPT-4o, triggered a viral flood of "Ghiblified" AI content, so much so that CEO Sam Altman said their "GPUs are melting" from demand. Within days, OpenAI imposed temporary rate limits. Meanwhile, artists and critics sounded the alarm, with some calling it the largest identity theft in art history and accusing OpenAI of appropriating Studio Ghibli's iconic style without consent or credit.

📌 MAIEI’s Take and Why It Matters:

This isn't just a copyright issue; it's a culture war flashpoint. Studio Ghibli founder Hayao Miyazaki famously called AI-generated art "an insult to life itself," and now his signature aesthetic is being mass-replicated at the click of a button. This Ghibli episode exposes the ethical vacuum at the heart of generative AI: style scraping at scale, without artists' involvement. The viral hype may have melted GPUs, but it's also melting trust. This moment should be a wake-up call for stronger guardrails, meaningful consent, and a rethinking of what "creative freedom" means when built on someone else's work.

xAI Buys X: Real-Time Data, Zero Real Consent

What Happened: Elon Musk’s AI company, xAI, has acquired X (formerly Twitter) in an all-stock deal valuing xAI at $80 billion and X at $33 billion. While headlines focus on the business strategy and valuation math, the most consequential part of this move isn’t financial — it’s informational. With this deal, Musk now controls one of the largest, most active pools of real-time human data on the planet.

📌 MAIEI’s Take and Why It Matters:

This acquisition turns 650 million users’ posts, photos, messages, and behaviours into fuel for xAI’s model training with no opt-in, no notice, and no compensation. It’s a textbook example of the blurred line between public data and personal rights in the AI age. The xAI/X deal is a stress test for global data governance: If the world’s richest individual can ingest real-time human data at scale without explicit user consent, what stops every other AI firm from doing the same? European regulators are already investigating Elon Musk and X for potential GDPR violations. However, this reveals a deeper systemic gap: our legal frameworks weren’t built for AI models that learn from everything we say and do online. This isn’t just about Musk. It’s about setting a precedent. Either we define the boundaries of ethical data use in AI now, or we normalize the idea that public participation equals perpetual fuel for training models.

Meta, Pirated Books, and the Consent Crisis in AI

What Happened: A recent investigation by The Atlantic revealed that Meta used pirated books, millions of them, to train its Llama 3 AI model, drawing from sites like LibGen without authors' knowledge or consent. A searchable index of affected works includes everything from bestselling titles by Margaret Atwood and Stephen King to indie authors and academic researchers. Creators only found out after the fact, when The Atlantic published the database.

📌 MAIEI’s Take and Why It Matters:

This isn’t just about copyright infringement; it’s a labour and consent issue at scale. Meta didn’t just scrape the web; it trained its most powerful model on entire books, knowing that legal licensing would take too long. The normalization of this kind of IP extraction erodes trust in AI companies and devalues the creative process itself.

As Ann Handley puts it,

It’s not colorless, odorless “data.” It’s words that make up sentences that make up paragraphs that make up pages that writers have created, crafted, coaxed into the world. Words with handprints, heart-prints, bitemarks, scratches from us trying to shape them into something that delights you.

It’s time for a serious reckoning. Creators deserve clarity on how their work is used, with proper attribution, credit, and compensation. AI companies must be held to higher ethical standards if we’re to build a more just digital ecosystem.

Did we miss anything? Let us know in the comments below.

🔎 One Question We’re Pondering:

Are today’s AI systems designed to obey rather than to question, and what does this mean for democracy, science, and society?

In an essay published on X in early March, Hugging Face Chief Scientist Thomas Wolf warned that today’s AI models are becoming “yes-men on servers,” optimized for compliance, not creativity. He argues that we’re training systems to excel at standardized tasks but fail at asking novel questions, the kind that leads to scientific breakthroughs or societal progress.

“To create an Einstein in a data center, we don't just need a system that knows all the answers, but rather one that can ask questions nobody else has thought of or dared to ask. One that writes 'What if everyone is wrong about this?' when all textbooks, experts, and common knowledge suggest otherwise.” - @Thom_Wolf

This critique raises a deeper concern: Are we building AI systems that reinforce the status quo rather than helping us interrogate it?

At the Montreal AI Ethics Institute, we see this as a civic as well as a technical problem. If AI models are shaped primarily by existing data and benchmark incentives, they risk replicating past biases, institutional blind spots, and epistemic inertia. A democratic society depends not just on information but on dissent, curiosity, and challenge, qualities that don’t fit neatly into most loss functions. If we want AI to support more just and resilient societies, it can’t just be built to please. It must be designed to provoke, to test, and to ask what others miss.

The question isn’t whether AI can pass exams or draft perfect summaries. It’s whether it can help us see differently and whether we, as builders and policymakers, are brave enough to design for that. We don’t need more algorithmic yes-men. We need AI systems that act like curious colleagues: supporting debate, embracing diverse ways of thinking, and helping us see what we’ve overlooked.

❤️ Support Our Work

Help us keep The AI Ethics Brief free and accessible for everyone by becoming a paid subscriber on Substack for the price of a coffee or making a one-time or recurring donation at montrealethics.ai/donate

Your support sustains our mission of Democratizing AI Ethics Literacy, honours Abhishek Gupta’s legacy, and ensures we can continue serving our community.

For corporate partnerships or larger donations, please contact us at support@montrealethics.ai

💭 Insights & Perspectives:

📌 Editor’s Note:

These three pieces stood out for how clearly they challenge assumptions about AI’s development and deployment. Whether it’s scrutinizing model architecture, government deployments, or community-led resistance, each one challenges the inevitability of "AI progress" and calls for deeper reflection on power, participation, and responsibility.

Why MCP Won - Latent Space

Anthropic's Model Context Protocol (MCP) has rapidly emerged as the dominant standard for AI agent interfaces since its November 2024 launch, with adoption accelerating dramatically following a February 2025 workshop. The protocol's success stems from several key factors: its AI-native design principles specifically tailored for large language model interactions, backing from a trusted major AI lab without lock-in concerns, a foundation on Microsoft's proven Language Server Protocol (LSP), a comprehensive ecosystem of supporting tools, and strategic rollout with a minimal initial feature set followed by clear roadmap updates. MCP's trajectory demonstrates how technical standards gain traction through a combination of robust design, institutional credibility, and community momentum rather than technical superiority alone, offering valuable lessons for future protocol development in the rapidly evolving AI tooling landscape.

To dive deeper, read the full article here.

Dispelling Myths of AI and Efficiency - Data & Society

This policy brief dismantles the belief that AI can inherently make government more efficient. It challenges claims from the U.S. Department of Government Efficiency (DOGE) that AI can objectively fire workers, detect fraud, and streamline services. Instead, it shows how AI systems often produce harms at scale, cutting people off from vital public benefits, reinforcing structural inequality, and ignoring the human labour needed to make tech function. The takeaway? AI can't replace people or politics without consequences, and treating it like a cost-saving shortcut only deepens societal harms.

To dive deeper, read the full article here.

AI Countergovernance: Lessons Learned from Canada and Paris - Tech Policy Press

This reflection piece highlights how grassroots organizing can resist state-led AI governance that lacks transparency, accountability, or public input. Drawing from Canada’s failed attempt to pass the Artificial Intelligence and Data Act (AIDA), the authors outline three key lessons: the power of relationships and ad hoc coalitions, the importance of local initiatives tailored to community needs, and the need for AI literacy efforts that originate from the bottom up. Rather than accepting AI regulation as inevitable or top-down, the authors argue for participatory models grounded in lived experience, equity, and public interest, making a compelling case for countergovernance as a civic practice.

To dive deeper, read the full article here.

📄 Article Summaries:

Apple’s AI isn’t a letdown. AI is the letdown. - CNN

Summary: Apple is under fire for underwhelming AI features, but the critique misses a larger point: AI itself might be overhyped. While companies scramble to insert AI into every product to please shareholders and meet market pressure, the reality is that most AI tools, even from major players, are glitchy, unpolished, and still far from revolutionary. The article argues we’re holding Apple to an impossible standard while overlooking broader industry stagnation.

Why It Matters: This isn’t just a tech critique; it’s a reflection of our expectations. Apple’s cautious, privacy-conscious approach stands in contrast to the "AI at all costs" trend. As trust and safety risks mount across the AI landscape, the question isn’t whether Apple’s AI is good enough; it’s whether today’s AI as a whole is being oversold.

To dive deeper, read the full article here.

Why handing over total control to AI agents would be a huge mistake - MIT Technology Review

Summary: As AI agents like OpenAI’s GPT-based tools evolve to automate tasks across email, travel, scheduling, and more, researchers warn that handing over full decision-making power to these systems is risky. The article outlines how current AI agents are built on opaque, centralized architectures and reinforce existing power imbalances, often without users understanding or consenting to what’s happening behind the interface.

Why It Matters: Delegating control to AI agents risks eroding user autonomy, reinforcing systemic biases, and reducing transparency in decision-making. Without meaningful oversight, these systems may act in ways that prioritize corporate interests over public well-being. The authors call for stronger safeguards, clearer disclosures, and more democratic governance of AI systems before agents become gatekeepers of our digital lives.

To dive deeper, read the full article here.

As Data Centers Push into States, Lawmakers are Pushing Right Back - Tech Policy Press

Summary: As demand for AI infrastructure grows, tech companies like Microsoft, Amazon, and Google are rapidly expanding data centres across the U.S. But state lawmakers are increasingly fighting back, citing concerns over energy use, costs to taxpayers, and lack of transparency in utility deals. New bills from states like Oregon, New York, and Texas aim to regulate data centre energy use, accountability, and public reporting. These moves reflect growing political tension between economic development incentives and long-term climate and energy planning.

Why It Matters: Data centres are becoming essential to powering AI, but they also consume massive energy and require complex utility deals, often negotiated behind closed doors. The pushback from lawmakers signals that unchecked AI infrastructure growth may face real policy resistance. As AI systems scale, so too must the governance frameworks that ensure they align with democratic values, environmental sustainability, and public accountability. This battle over infrastructure is also a battle over who controls the future of AI deployment, and at what cost to communities.

To dive deeper, read the full article here.

💬 Your AI Ethics Question, Answered:

📊 Results from Our Last Poll

Thanks to everyone who weighed in on our last poll. The clear takeaway? Human oversight still matters.

A combined 91% of responses leaned towards keeping people in the loop, either through a human-first approach or AI-assisted, human-approved models. While AI promises speed and efficiency, these results reaffirm the importance of human oversight, judgment, and ethical responsibility in AI-driven decision-making.

📖 From Our Living Dictionary:

What do we mean by “Compute”?

👇 Learn more about why it matters in AI Ethics via our Living Dictionary.

✅ Take Action:

We’d love to hear from you, our readers, about any recent research papers, articles, or newsworthy developments that have captured your attention. Please share your suggestions to help shape future discussions!
