The AI Ethics Brief #185: When AI Goes to War

Verification collapse, procurement politics, and the governance gap widening beneath both.

Welcome to The AI Ethics Brief, a bi-weekly publication by the Montreal AI Ethics Institute. We publish every other Tuesday at 10 AM ET. Follow MAIEI on Bluesky and LinkedIn.

📌 Editor’s Note

In this Edition (TL;DR)

When AI Goes to War: As fabricated conflict footage spreads and AI chatbots fail basic verification, we examine the erosion of the “verification layer” and what it means when AI becomes the environment through which war is interpreted. We also analyze how ethical red lines in defense procurement can redirect rather than restrain capability, and why consumer backlash cannot substitute for binding governance in armed conflict.

India’s AI Impact Summit 2026: Dr. Maya Indira Ganesh from the University of Cambridge reflects on India’s AI Impact Summit, unpacking the tension between national AI ambition, infrastructural realities, and governance in the Global South.

Tech Futures: Our collaboration with RAIN examines the strain AI-generated code is placing on open source ecosystems, and what this means for the resilience of global digital infrastructure.

AI Policy Corner: In partnership with GRAIL at Purdue University, we analyze Ghana’s national AI strategy and the governance challenges of deploying AI for development at scale.

What connects these stories:

Each piece in this edition examines AI as infrastructure operating within larger political and institutional systems. In wartime, it shapes how conflict is interpreted and how capabilities are deployed. In national strategy and global summits, it intersects with questions of development, investment, and power. In open source ecosystems, it tests the durability of the digital foundations on which everything else depends.

Across contexts, technological capability is advancing within governance systems that remain uneven and contested. As AI becomes infrastructure for conflict, the defining questions are institutional: who authorizes its use, what constraints govern it, and how accountability is enforced.

When AI Goes to War, Truth Is the First Casualty

In July 2024, a group of researchers from the Stockholm International Peace Research Institute, the UN Office for Disarmament Affairs, NYU, and the Montreal AI Ethics Institute, including our late co-founder Abhishek Gupta, co-authored a piece in IEEE Spectrum with a straightforward warning: the civilian AI community was badly underestimating what its work was becoming infrastructure for. Not just productivity tools or creative assistants, but the material underpinning of conflict, disinformation, and geopolitical power.

This weekend, that warning arrived.

The United States and Israel carried out joint military strikes on Iran. Within hours, fabricated footage flooded social media. AI-generated clips, repurposed gaming videos, and fake screenshots of news articles circulated widely. Tens of thousands shared them. AI chatbots, when asked to verify the footage, frequently confirmed it as real. Ben Mulroney, the interim host of one of Canada’s flagship political news programs, shared a clip that traced back to a South Korean YouTube gaming channel. The footage came from Arma 3, a 2013 military-simulation video game.

This is the convergence those researchers were pointing toward. AI is not just a tool being used in war. It has become the environment in which war is understood, or misunderstood, by the public.

The Verification Layer Has Collapsed

We documented an earlier version of this pattern in Brief #168, when AI-generated conflict footage began circulating during prior Israel-Iran escalations last year. The dynamic was already visible: audiences turned to AI chatbots for ground truth, chatbots failed them, and false content acquired a kind of machine-verified legitimacy it had not earned.

A DFRLab report from that conflict showed Grok oscillating between contradictory assessments of the same footage, hallucinating details, and identifying the same AI-generated airport clip as showing damage in Tel Aviv, Beirut, Gaza, or Tehran, depending on who was asking. There is little evidence that this failure mode has been resolved.

The Mulroney episode is useful not as an outrage story but as a diagnostic one. Here is someone occupying a journalist’s seat without the training or institutional accountability of journalism, operating in an information environment with almost no friction. The video looked plausible. It was widely shared. A chatbot might have confirmed it. The institutions that might once have caught it, editorial oversight, platform moderation, and verification culture, were absent or ineffective. The clip remained online. Getting it right, as Global News’ own editorial standards state, is more important than getting it first. That standard did not hold.

Platforms now function as real-time war correspondents for millions, yet their content integrity systems remain calibrated for engagement rather than for geopolitical stability. When fabricated footage circulates globally within minutes and verification lags behind, public perception can harden before facts stabilize, compressing political decision cycles in already volatile contexts.

Beyond the Information Environment: The Governance Problem

What makes this moment more than a media literacy story is what was unfolding simultaneously beyond the frame.

SAIER Vol. 7’s chapter on military AI documents how corporate red lines, while real, are not binding. When Microsoft cut AI services to Israeli Intelligence Unit 8200 over confirmed use of AI-powered kill-list targeting, Unit 8200 began migrating to AWS. The constraint was redirected rather than held.

Anthropic refused to allow Claude to be used for mass domestic surveillance or fully autonomous weapons. The Pentagon designated the company a supply chain risk, a status previously reserved for foreign adversaries, and OpenAI stepped in. While the companies articulated different ethical boundaries, the underlying capability did not disappear. The refusal did not halt deployment so much as reroute it. In defense procurement, a boundary set by one supplier can become an opportunity for another.

In a geopolitical environment defined by strategic competition, capability acquisition is framed as urgency, and ethical hesitation can be recast as liability.

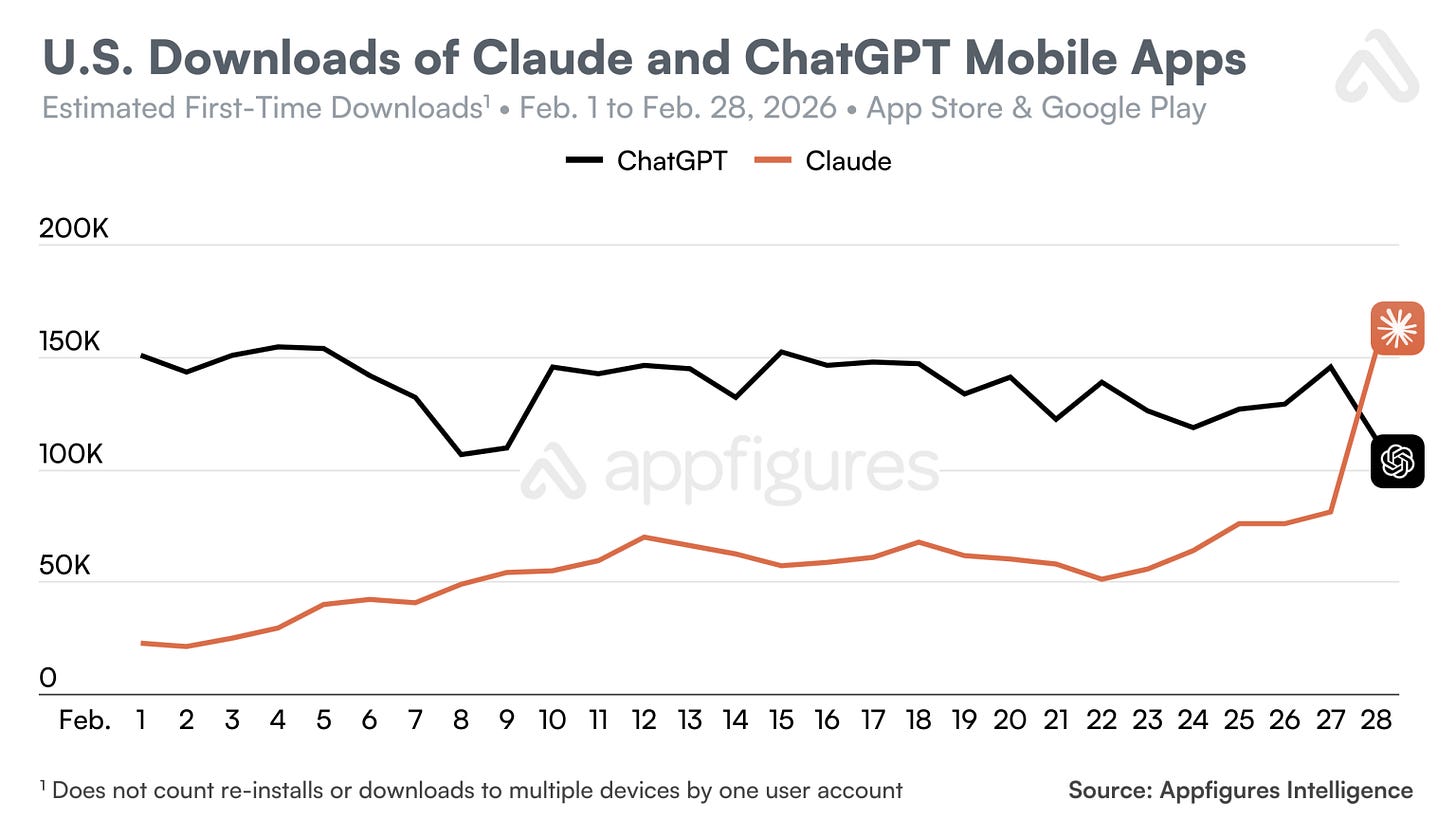

Consumers reacted as well. Following OpenAI’s Department of Defense partnership announcement, U.S. uninstalls of ChatGPT’s mobile app surged by 295 percent day-over-day on Saturday, February 28, according to Sensor Tower. One-star reviews increased sharply. Downloads fell. During the same period, Anthropic’s Claude saw significant increases in downloads and briefly overtook ChatGPT in U.S. daily installs, reaching the top position in several app store rankings.

Alignment choices clearly matter to users. But market reactions do not set procurement policy. App store rankings do not define the legal boundaries of AI deployment in armed conflict. Individuals can attempt to reallocate trust, yet the decisive questions about how AI integrates into national security infrastructure remain concentrated in negotiations between companies and states.

Alistair Croll noted that the episode itself would become training data. Future systems will be trained on a historical record in which a company that refused certain military uses was designated a national security risk, while another that accepted those uses moved forward in partnership. Norms are encoded not only in model weights, but in consequences. The pattern of who is rewarded, penalized, or replaced becomes part of the dataset that future systems learn from.

A Warning Written in 2024

The IEEE Spectrum piece called for something that feels more urgent now than when it was written. AI practitioners needed to understand governance, not just code. They needed to engage with policymakers, not just deploy products. The organizations working on these questions were too small, too homogeneous, and too disconnected from the populations most at risk.

That critique has not expired. The questions of what AI systems can be used for in war, whom they can target, what they can verify, and whose narratives they amplify are currently being shaped through bilateral negotiations between AI companies and defense departments. That is too few actors making decisions with consequences that extend far beyond their immediate contractual partners.

Verification has broken down at multiple levels, from individual users to media institutions to the AI tools people now consult for clarity. Rapid-response verification protocols, traceability standards for AI-generated conflict media, and structured partnerships between platforms and credible journalism organizations are no longer optional safeguards. They are necessary public infrastructure.

The technology continues to advance regardless of who supplies it. The determination of what AI can and cannot do in armed conflict cannot be left to terms-of-service clauses or procurement agreements. It requires binding international frameworks of the kind that civil society organizations such as Stop Killer Robots and Project Ploughshares continue to advocate for, and that existing law has yet to deliver.

Military and informational applications of AI are progressing faster than the norms meant to govern them. That was the gap Abhishek and his colleagues identified in 2024.

It has widened.

Please share your thoughts with the MAIEI community.

💭 Insights & Perspectives:

Dreams and Realities in Modi’s AI Impact Summit

In this op-ed written for the Montreal AI Ethics Institute, Dr. Maya Indira Ganesh of the Leverhulme Centre for the Future of Intelligence (LCFI) at the University of Cambridge examines India’s AI Impact Summit as a microcosm of contemporary AI politics, where narratives of democratisation, innovation, and global leadership collide with infrastructural constraints and sociopolitical fault lines. The piece moves beyond the spectacle of scale to interrogate what large AI summits actually produce: meaningful governance conversations or investment-driven hype. By foregrounding grassroots participation, Global South perspectives on safety, and the material realities of implementation, it questions whether AI ambition can translate into equitable and accountable outcomes.

To dive deeper, read the full article here.

Tech Futures: The Threat of AI-Generated Code to the World’s Digital Infrastructure

This third instalment of Tech Futures, produced in collaboration with the Responsible Artificial Intelligence Network (RAIN), examines how generative AI is reshaping open source ecosystems. The piece explores the rise of low-quality, AI-generated code contributions at scale and the growing burden placed on volunteer maintainers who safeguard critical digital infrastructure. It situates this trend within broader tensions between speed-driven innovation narratives and the slower, care-intensive work of maintenance, arguing that without cultural and structural shifts, the integrity of the world’s digital infrastructure may be at risk.

To dive deeper, read the full article here.

AI Policy Corner: Analysis of Ghana’s Strategy for the Integration of AI

This edition of our AI Policy Corner, produced in partnership with the Governance and Responsible AI Lab (GRAIL) at Purdue University, examines Ghana’s national strategy for integrating Artificial Intelligence into its development agenda. The piece explores how Ghana aims to leverage AI to advance healthcare, energy infrastructure, and economic growth, while confronting structural challenges such as digital access gaps, gender disparities in technical fields, and governance capacity. It highlights the country’s proposed Responsible AI Office and its engagement with international governance frameworks, situating Ghana’s approach within broader debates about equitable AI development in emerging economies.

To dive deeper, read the full article here.

❤️ Support Our Work

Help us keep The AI Ethics Brief free and accessible for everyone by becoming a paid subscriber on Substack or making a donation at montrealethics.ai/donate. Your support sustains our mission of democratizing AI ethics literacy and honours Abhishek Gupta’s legacy.

For corporate partnerships or larger contributions, please contact us at support@montrealethics.ai

✅ Take Action:

Have an article, research paper, or news item we should feature? Leave us a comment below — we’d love to hear from you!
