The AI Ethics Brief #187: The Myth of Inevitability

On manufactured inevitability and the surveillance infrastructure being built in plain sight.

Welcome to The AI Ethics Brief, a bi-weekly publication by the Montreal AI Ethics Institute. We publish every other Tuesday at 10 AM ET. Follow MAIEI on Bluesky and LinkedIn.

📌 Editor’s Note

In this Edition (TL;DR)

The Inevitability Myth. Karen Hao and Timnit Gebru, speaking at SXSW alongside MacArthur Foundation President John Palfrey, named it plainly: the utopian and dystopian AI narratives are two sides of the same coin, engineered to keep power in the same small group of hands. We examine the counter-movements pushing back.

You Are Already Being Watched. Meta’s Ray-Ban glasses are routing intimate footage to overseas contractors. Flock Safety’s 90,000 cameras are performing 20 billion licence plate scans a month. We map the surveillance infrastructure being assembled, one procurement decision at a time.

Tech Futures. Our collaboration with RAIN examines how Big Tech borrows from the fossil fuels industry playbook: softening the language around extraction, and embedding itself in the policy spaces meant to govern it.

AI Policy Corner. In partnership with GRAIL at Purdue University, we look at how Anthropic constructs the boundaries of model behaviour not in one document but across an entire governance stack, and what that reveals about corporate AI governance more broadly.

What Connects These Stories:

All four pieces in this edition ask the same question: who decides what the infrastructure of AI looks like, and who bears the cost?

In Silicon Valley, a small group of founders and investors decided the trajectory was inevitable and democratic deliberation a distraction. Civic communities around the world are deciding otherwise, blocking data centres, cancelling surveillance contracts, and building technology that serves their own people on their own terms. Wearable devices, licence plate networks, and Ring doorbell cameras were each approved, launched, and defended individually. Together they constitute something no single procurement decision had to account for.

Tech Futures and AI Policy Corner extend the inquiry. Tech Futures traces how Big Tech softens the language around extraction and embeds itself in the policy spaces meant to govern it. AI Policy Corner shows how model behaviour is bounded not in one document but across an entire governance stack, each layer shaping what accountability means in practice.

The infrastructure of AI is being built, governed, and contested simultaneously. The people doing the building and the people bearing the consequences are rarely the same.

The Inevitability Myth

Every CEO in Silicon Valley needs you to believe one thing: that AI is inevitable. Not useful. Not necessary. Inevitable, as in resistance is futile, adaptation is the only rational response, and anyone questioning the direction of travel is naive or obstructionist.

This is not analysis. It is a business strategy.

The zero-human company is where this narrative has been heading all along. What Sam Altman floated as a thought experiment in early 2024, a unicorn run by one person and AI agents, has since become a serious planning horizon. The discourse has moved from “could AI run a billion-dollar company?” to “who will be first?” Software engineering, the profession most immediately exposed to this shift, is watching from the inside as economic incentives push toward a future where productivity gains accrue to a vanishingly small number of owners and costs are distributed broadly and unevenly.

It was precisely this trajectory that Karen Hao and Timnit Gebru were pushing back against at SXSW this month, joining John Palfrey, President of the John D. and Catherine T. MacArthur Foundation, for a conversation on reclaiming our humanity in the age of AI. The exchange was clarifying in ways the usual discourse rarely is.

For Hao, the utopian and dystopian AI narratives are two sides of the same coin, both engineered by the same small group of people in Silicon Valley to reach the same conclusion: that only they should control this technology. Build the machine god or risk the machine devil, but either way, leave it to us. The panacea and the doomsday scenario are not opposing views. They are coordinated myth-making, designed to foreclose democratic deliberation before it begins.

Gebru, founder of the Distributed AI Research Institute, put it plainly: the field moved toward AGI not because of scientific consensus, but because of ideology, something closer to a secular religion, where true believers justify extraordinary resource extraction and labour exploitation in the name of building either heaven or preventing hell. When she was at Google, the question was never “what problem are we solving?” It was “why aren’t we building the biggest model?”

That foreclosure of democratic deliberation is not hypothetical. In Canada, an investigation by Linda Solomon Wood at Canada's National Observer, documented in SAIER Vol. 7, revealed that a group called KICLEI was using an AI chatbot to flood municipal councils with climate misinformation, generating letters that appeared to come from legitimate environmental organizations while promoting anti-climate messaging. Councillors in multiple provinces were quoting the same AI-generated talking points verbatim, without knowing it. The tool built to detect this, Civic Searchlight, now offers a glimpse of what AI-enabled democratic manipulation looks like at scale, and what it takes to counter it.

But here is what the inevitability narrative most needs to suppress: the existence of choice.

People around the world are already making different ones. When Hollywood writers went on strike in 2023, they refused to cede creative work to AI systems, and they won. When residents in Ennis, Ireland filed legal challenges to block a four-billion-euro data centre planned for local farmland, and activists in Santiago, Chile successfully pushed Google to withdraw plans that would have depleted precious aquifers, they did so having concluded that the infrastructure of AI development was not compatible with their own communities. Masakhane, a grassroots research collective, has spent years building natural language processing tools for African languages, by Africans, rejecting the premise that one model trained on one set of values should serve everyone. Te Hiku Media in New Zealand built their own Māori language models and refused to license the data to U.S. firm Lionbridge, stating plainly: “They suppressed our languages and physically beat it out of our grandparents, and now they want to sell our language back to us as a service.”

None of these required waiting for permission from anyone building a machine god.

The We and AI collective recently published a framework, “Resisting, Refusing, Reclaiming, Reimagining AI,” that maps exactly these kinds of responses. Resisting means rejecting the ideological scaffolding that makes AI expansion appear natural. Refusing means drawing specific red lines around tools and systems that cause harm. Reclaiming means wresting governance back from the engineers and investors who have captured it. Reimagining means building alternative visions of technology designed around care, collective wellbeing, and planetary health rather than extraction and scale. The framework matters because it names something the inevitability narrative actively suppresses: there are many ways to push back, and none of them require you to be against technology.

What is actually inevitable, if the current trajectory holds, is a narrowing. Of who benefits, of who governs, of what questions are considered worth asking. That is not a law of nature. It is a set of choices, made by specific people, for specific reasons, that can be contested by everyone else.

The future of AI is not decided. The inevitability narrative needs you to forget that.

You Are Already Being Watched

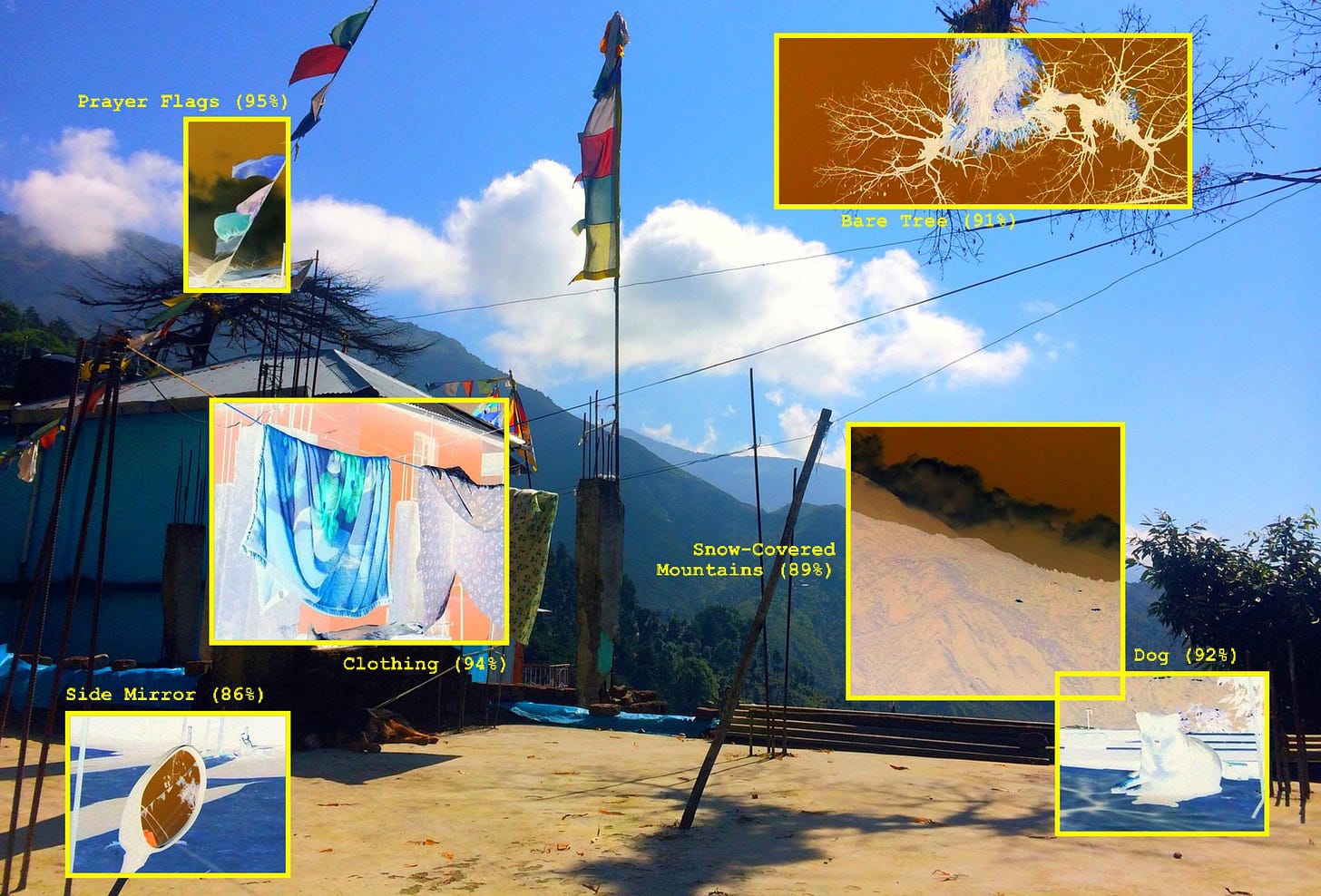

In late February, a Swedish investigation found that data annotators working for Sama, a Nairobi-based subcontractor for Meta, had been reviewing footage captured by Ray-Ban Meta smart glasses, including videos of people undressing, sexual acts, and bank card numbers recorded without the subjects’ knowledge.

One worker described the content directly:

“We see everything – from living rooms to naked bodies. Meta has that type of content in its databases. People can record themselves in the wrong way and not even know what they are recording. They are real people like you and me.”

A federal class action lawsuit followed within days, alleging Meta had misrepresented how footage from the glasses was handled. Meta had marketed the glasses with a straightforward promise: “designed for privacy, controlled by you.”

The glasses are designed to look indistinguishable from regular eyewear. The only indicator of active recording is a small LED on the right frame, and hobbyists sell modification kits to disable it for around $60. When New York Times reporter Brian Chen wore the glasses in public, shooting 200 photos and videos on trains, hiking trails, and in parks, nobody noticed. Why would they? It is rude to stare at a stranger’s glasses. The social norms that allow us to coexist in shared spaces were not designed for ambient, invisible surveillance.

They were also not designed for a world where two Harvard students could pair the same glasses with a facial recognition service and people-search sites to instantly surface a stranger’s home address, phone number, and family members, in real time, just by looking at them. In April 2025, Meta updated its privacy policy to remove the option for users to prevent voice recordings from being stored, making always-on AI features the default.

The glasses are one piece of a much larger picture. Flock Safety, a private company founded in 2017 in Atlanta, Georgia, operates what it describes as the largest public-private safety network. As of mid-2025, that network comprised between 80,000 and 90,000 cameras across 49 states, performing over 20 billion licence plate scans every month, with contracts covering more than 5,000 communities and 4,800 law enforcement agencies. Every vehicle that passes a Flock camera is photographed, and its plate is logged with a timestamp and location and fed into a searchable national database.

The EFF obtained records representing over 12 million searches by more than 3,900 agencies between late 2024 and late 2025, and the patterns were unambiguous: the system had been used to track protesters at political demonstrations, including the 50501 protests in February 2025, the Hands Off protests in April 2025, and the No Kings protests in June and October 2025. In Washington state, a University of Washington study found that eight law enforcement agencies had shared their Flock networks directly with US Border Patrol, while Border Patrol had accessed the data of at least ten other departments without those departments' permission.

At least 30 municipalities have cancelled or deactivated their Flock contracts since the start of 2025, many citing fears that local surveillance data was feeding a federal deportation infrastructure. Flock's CEO described the opposition as coming from “the same activist groups who want to defund the police, weaken public safety, and normalize lawlessness.” The police chief of Staunton, Virginia wrote back to disagree. “These citizens have been exercising their rights to receive answers from me, my staff, and city officials, to include our elected leaders,” he said. “In short, it is democracy in action.”

That democracy has had consequences. In February 2026, Amazon cancelled a planned integration between its Ring doorbell cameras and Flock Safety's network, following intense public backlash over fears that neighbourhood surveillance footage would feed directly into federal immigration enforcement. Ring had aired a Super Bowl ad showing dozens of cameras scanning a neighbourhood street. The ad was meant to promote a lost-dog feature. The public saw something else.

Taken separately, each of these stories has a familiar shape: a privacy scandal, a police technology controversy. Taken together, they describe something more coherent: a surveillance infrastructure being assembled through consumer products and law enforcement contracts, without any public deliberation about whether this is the world we want to live in.

The data flowing through these systems is not neutral. It is used to track movement, infer intent, and extract value from individual behaviour. Wearable devices capture intimate footage routed to overseas contractors. Licence plate networks are queried to monitor protesters and identify immigrants.

None of this required a single authoritarian decree. It emerged from thousands of procurement decisions, product launches, and privacy policy updates, each individually defensible, collectively constituting a surveillance state. It just was not called that when it was being built.


💭 Insights & Perspectives:

Tech Futures: The Fossil Fuels Playbook for Big Tech: Part II

This article is part of our Tech Futures series, a collaboration between the Montreal AI Ethics Institute (MAIEI) and the Responsible Artificial Intelligence Network (RAIN). In this special instalment of Tech Futures by RAIN, we describe two ways in which the Big Tech industry draws on the playbook of the fossil fuels industry.

To dive deeper, read the full article here.

AI Policy Corner: Layered Governance in AI Labs: Defining Boundaries Across the Policy Stack

This edition of our AI Policy Corner, produced in partnership with the Governance and Responsible AI Lab (GRAIL) at Purdue University, uses Anthropic’s Claude Constitution, Responsible Scaling Policy, and Claude Sonnet 4.6 System Card as a representative example of how layered corporate AI policy documents combine to define and shape the boundaries of model behaviour across a governance stack.

To dive deeper, read the full article here.

❤️ Support Our Work

Help us keep The AI Ethics Brief free and accessible for everyone by becoming a paid subscriber on Substack or making a donation at montrealethics.ai/donate. Your support sustains our mission of democratizing AI ethics literacy and honours Abhishek Gupta’s legacy.

For corporate partnerships or larger contributions, please contact us at support@montrealethics.ai

✅ Take Action:

Have an article, research paper, or news item we should feature? Leave us a comment below — we’d love to hear from you!
