AI Ethics Brief #72: Value-sensitive design, technologists not in control, privacy in the brain, and more ...

How mature are you with your AI Ethics initiatives?

Welcome to another edition of the Montreal AI Ethics Institute’s weekly AI Ethics Brief that will help you keep up with the fast-changing world of AI Ethics! Every week we summarize the best of AI Ethics research and reporting, along with some commentary. More about us at montrealethics.ai/about.

⏰ This week’s Brief is a ~14-minute read.

Support our work through Substack

💖 To keep our content free for everyone, we ask those who can to support us by becoming a paying subscriber for as little as $5/month. If you’d prefer to make a one-time donation, visit our donation page. We use this Wikipedia-style tipping model to support the democratization of AI ethics literacy and to ensure we can continue to serve our community.


This week’s overview:

✍️ What we’re thinking:

The Technologists are Not in Control: What the Internet Experience Can Teach us about AI Ethics and Responsibility

🔬 Research summaries:

AI Ethics Maturity Model

Mapping value sensitive design onto AI for social good principles

📰 Article summaries:

Privacy in the Brain: The Ethics of Neurotechnology

Six Essential Elements Of A Responsible AI Model

An Artificial Intelligence Helped Write This Play. It May Contain Racism

Facebook Apologizes After A.I. Puts ‘Primates’ Label on Video of Black Men

But first, our call-to-action this week:

Preserving the Ecosystem: AI, Data and Algorithms

The Montreal AI Ethics Institute is partnering with AI Policy Labs for a discussion on AI and the environment.

The discussion will cover how AI is being leveraged for a greener future. Because of the computational power it requires, AI has the potential to harm the environment while also holding the key to innovation. This paradox, viewed through an environmental lens, will be the mainstay of this meetup.

📅 September 9th (Thursday)

🕛 Noon – 1:30 PM EST

✍️ What we’re thinking:

Permission to be uncertain:

Interview with David Clark, Senior Research Scientist, MIT Computer Science & Artificial Intelligence Lab

Clark has been involved with the development of the Internet since the 1970s. He served as Chief Protocol Architect and chaired the Internet Activities Board throughout most of the 80s, and more recently worked on several NSF-sponsored projects on next generation Internet architecture. In his 2019 book, Designing an Internet, Clark looks at how multiple technical, economic, and social requirements shaped and continue to shape the character of the Internet.

In discussing his lifelong work, Clark makes an arresting statement: “The technologists are not in control of the future of technology.” In this interview, I explore the significance of those words and how they can inform today’s discussions on AI ethics and responsibility.

To delve deeper, read the full article here.

🔬 Research summaries:

AI Ethics Maturity Model

Ethical AI is a hot topic in the business world, but how can it be achieved? The Ethical AI Practice Maturity Model sets out four steps towards the end goal of an “end-to-end-ethics-by-design” model. Reaching that goal requires company-wide participation and a passion for building ethical AI.

To delve deeper, read the full summary here.

Mapping value sensitive design onto AI for social good principles

Value sensitive design (VSD) is a method for shaping technology in accordance with our values. In this paper, the authors argue that, when applied to AI, VSD faces some specific challenges (connected to machine learning, in particular). To address these challenges, they propose modifying VSD, integrating it with a set of AI-specific principles, and ensuring that the unintended uses and consequences of AI technologies are monitored and addressed.

To delve deeper, read the full summary here.

📰 Article summaries:

Privacy in the Brain: The Ethics of Neurotechnology

What happened: The article highlights the emerging regulatory challenges in neurotechnology. The quantified wellness industry has taken off, with mass-market devices like the Muse headband claiming to tap into brain signals to enhance productivity and improve meditation practices. What was previously the domain of the DIY community is now going mainstream. One of the interviewees explains that unless these devices are held to the same stringent FDA standards as other medical devices, we risk causing irreversible harm to those who use them in an experimental fashion.

Why it matters: The demonstration from Musk’s Neuralink brought neurotechnology to the attention of many more people than before. The technology is pitched as a way to augment the brain and enable machine-mediated communication with each other, in addition to the more immediate (and perhaps realistic) benefits of helping those with vision and speech problems. Against that backdrop, the ethical concerns around commercial technologies that lack medical-grade approvals are very unnerving. The privacy implications, especially in an era of continual cyberattacks, are another exacerbating factor.

Between the lines: While physical damage like skin burns from incorrect use of transcranial direct current stimulation (tDCS) devices can be measured to a certain extent, there is potential for damage that is hidden, or that alters a person’s subjective experience, and can’t be quantified. In such cases, determining who bears liability is tricky, especially with incomplete information, and this has some bearing on many of the scenarios we encounter in AI ethics as well.

Six Essential Elements Of A Responsible AI Model

What happened: The article presents a simple model with six items to think about in Responsible AI: accountable, impartial, transparent, resilient, secure, and governed. This is a combination, and perhaps a rehash, of many existing frameworks, guidelines, and sets of principles, and the author admits as much. The biggest takeaway from the article is its list of questions to ask when deciding whether or not to have an AI ethics board. In particular, it is important to separate who should be held accountable when something goes wrong from who is responsible for making changes to address undesirable outcomes.

Why it matters: A lot of frameworks in Responsible AI end up being either overly complicated or overly simplistic. This model perhaps has the right level of granularity, but more usefully, it gives someone who is early in their Responsible AI journey a core set of priorities to start from.

Between the lines: What we really need next is a comprehensive evaluation of how effective this framework, and all the other frameworks out there, are at meeting the stated goal of achieving responsible AI in practice within organizations. Until that happens, we can’t meaningfully pick one framework over another, because all evaluations so far are theoretical in nature.

An Artificial Intelligence Helped Write This Play. It May Contain Racism

What happened: Human-machine teaming is a domain that often surfaces unexpected results. In a play staged at the Young Vic in London, playwrights have joined forces with GPT-3 to generate a script on the spot, which a troupe of actors then performs without any rehearsals, yielding a unique play every night. Because the production takes an uncensored and unfiltered approach, the harsh stage lighting of the Young Vic will also lay bare the biases that pervade GPT-3’s outputs, mirroring the realities of the world outside the stage. Jennifer Tang, the director, sees this as an exciting foray into the future of what AI can do for the creative field.

Why it matters: We have seen a lot of debate around the role that AI will play in the creative fields, something that we’ve covered in Volume 5 of the State of AI Ethics Report as well, but using a very powerful model like GPT-3 side-by-side with humans to generate creative outputs is novel. While scholars interviewed in the article caution against attributing creativity to the outputs from the system, it might be worth considering whether such a tool helps to boost artists’ creativity by expanding the solution space that they can then explore.

Between the lines: Biases in the outputs from GPT-3 are very problematic. From stereotypical dialogue allocation based on the religion of the actors to outright homophobia and racism, the issues with such large-scale models are numerous. How such problems are tackled, and whether these models can reach the stage where they become trusted tools in the creative process, remains to be seen. The first step in that process is highlighting the problems and beginning to build tools that can address them before this becomes a common practice in the creative industries.

Facebook Apologizes After A.I. Puts ‘Primates’ Label on Video of Black Men

What happened: Facebook provides automated recommendations for videos and other content on its platforms as users consume content. In a particularly egregious error, the platform showed a prompt asking the user whether they wanted to “keep seeing videos about Primates” when the video they were watching was in fact a video of a few Black men having an altercation with some White people and police officers. The video had nothing to do with primates whatsoever. Spokespersons for the company said that they are doing a root cause analysis to see what might have gone wrong.

Why it matters: The use of an AI system trained without guardrails, especially when there are known issues of bias and racism in the outputs of such systems, shows that not enough is yet being done to mitigate these issues, which can instantly impact millions of people because of the scale and pace at which these systems operate. Facebook as a platform has the largest repository of user-uploaded content and it uses that to train its AI systems. But this recent incident demonstrates that there is much more to be done before this becomes something that we can safely deploy, if we ever get there.

Between the lines: Given the large number of problems that automated recommendation systems have today, it is a bit surprising that they are still deployed. What would be interesting to analyze is the extent to which these companies are willing to overlook such incidents in the interest of the gains that they get from keeping people engaged on the platform when the recommendations do work. Because the external research community and the public have no visibility into this tradeoff, it is increasingly difficult to hold such organizations accountable for such errors, or to recommend corrective actions, when it’s not entirely clear how the system is built and operated or what the internal cost-benefit analysis looks like.

From our Living Dictionary:

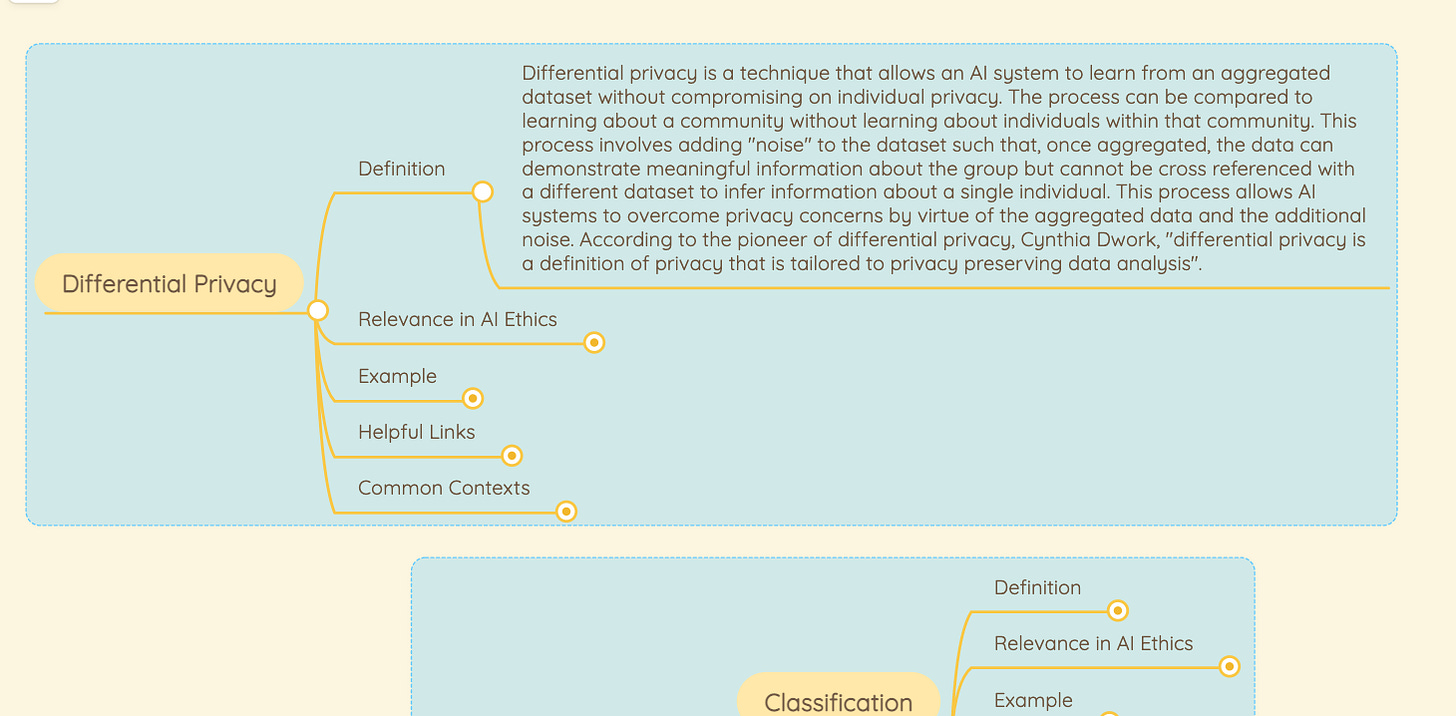

‘Differential Privacy’

👇 Learn more about why it matters in AI Ethics via our Living Dictionary.

In case you missed it:

Warning Signs: The Future of Privacy and Security in an Age of Machine Learning

Machine learning (ML) is proving to be one of the most novel discoveries of our time, and it’s precisely this novelty that raises most of the issues we see within the field. This white paper demonstrates how the warning signs appearing in the ML arena, although not conclusive, point toward a potentially problematic future for privacy and data security. The lack of established practices and universal standards means that solutions to these warning signs are not as straightforward as with traditional security systems. Nonetheless, the paper demonstrates that solutions are available, but only if the path of interdisciplinary communication and a proactive mindset is followed.

To delve deeper, read the full summary here.
