The AI Ethics Brief #158: Paris AI Action Summit, AI Governance, and Critical Thinking in the Age of AI

What Happens When AI Governance Divides? How AI is Reshaping Critical Thinking—And Why It Matters.

Welcome to The AI Ethics Brief, a bi-weekly publication by the Montreal AI Ethics Institute. Stay informed on the evolving world of AI ethics with key research, insightful reporting, and thoughtful commentary. Learn more at montrealethics.ai/about.

In This Edition:

🚨 Here’s Our Take on What Happened Recently:

The Paris AI Summit: Deregulation, Fear, and Surveillance

🔎 One Question We’re Pondering:

Is AI Undermining Our Ability to Think Critically?

💬 Your AI Ethics Question, Answered:

How Should AI Governance Be Structured?

💭 Insights & Perspectives:

From Promise to Practice: A Glimpse into AI-Driven Approaches to Neuroscience

AI Chatbots: The Future of Socialization

🔬 Research Summaries:

Evaluating the Social Impact of Generative AI Systems in Systems and Society

Ten Simple Rules for Good Model-sharing Practices

Incentivized Symbiosis: A Paradigm for Human-Agent Coevolution

📄 Article Summaries:

Meta’s Yann LeCun predicts ‘new paradigm of AI architectures’ within 5 years and ‘decade of robotics’ - TechCrunch

Experts warn DeepSeek is 11 times more dangerous than other AI chatbots - TechRadar

Evaluating Security Risk in DeepSeek and Other Frontier Reasoning Models - Cisco

📖 From Our Living Dictionary:

What do we mean by “Context Window”?

🌐 From Elsewhere on the Web:

Anatomy of an AI Coup - Tech Policy Press

Who’s using AI the most? The Anthropic Economic Index breaks down the data - VentureBeat

💡 In Case You Missed It:

Do Less Teaching, Do More Coaching: Toward Critical Thinking for Ethical Applications of Artificial Intelligence

🚨 Here’s Our Take on What Happened Recently

The Paris AI Summit: Deregulation, Fear, and Surveillance

The Paris AI Action Summit took place last week, co-hosted by French President Emmanuel Macron and Indian Prime Minister Narendra Modi, bringing together policymakers, industry leaders, and civil society representatives from over 100 countries to discuss AI governance and international collaboration.

A notable development was the decision by the United States and the United Kingdom not to sign the summit’s non-binding declaration, the Statement on Inclusive and Sustainable Artificial Intelligence for People and the Planet. Over 50 countries, including France, India, China, Canada, and Germany, endorsed the agreement. The US and UK abstained, citing concerns over global governance and national security.

Ahead of the conference, a leaked draft of the statement, published by Transformer, was widely criticized for being heavy on buzzwords but light on concrete action. Professor Stuart Russell, president of the International Association for Safe and Ethical AI, described as “meaningless” a section pledging that safety would be ensured through an “open, multi-stakeholder, and inclusive approach.”

Beyond the policy commitments, the summit also signaled Europe’s ambition to reduce its reliance on US Big Tech. The European Union announced a €200 billion investment to strengthen AI infrastructure and innovation within member states. However, concerns remain that geopolitical competition and industry influence may overshadow public interest governance.

📌 MAIEI’s Take: What This Means for AI Ethics

Ana Brandusescu and Prof Renée Sieber provide a critical analysis of the summit, highlighting how public interest AI governance was overshadowed by deregulation narratives and national security interests. Their piece raises concerns that AI policy is being shaped primarily to serve corporate and state power rather than to ensure public accountability.

We weren’t sanguine about the inclusion of public concerns in AI safety, as envisioned at Bletchley Park in 2023, the first AI summit. In Paris, we witnessed a shift from more expansive considerations of AI safety towards a far narrower AI for defense. Indeed, the UK AI Safety Institute has already announced its rebranding to the UK AI Security Institute. AI safety was always vulnerable to being weaponized and, therefore, easily reduced to improving algorithmic performance and making a nation-state safe. AI safety has now become almost exclusively national AI security, both defensive (e.g. cybersecurity) and offensive (e.g. information warfare). It has also solidified into a panicked race for market dominance. Additionally, AI safety as AI security represents a gold rush for border tech surveillance companies, especially for the Canada-US border, the longest in the world. Soft laws and soft norms (in the case of defense) are insufficient to protect us from unaccountable companies.

Ultimately, the question remains: Who is AI governance really serving—people or power?

📖 Read Ana and Prof Sieber’s full analysis on the MAIEI website.

Did we miss anything? Let us know in the comments below.

🔎 One Question We’re Pondering:

Is AI Undermining Our Ability to Think Critically?

Two recent studies have brought renewed attention to the cognitive effects of AI reliance:

1️⃣ Michael Gerlich’s study on AI Tools in Society finds that frequent AI tool users engage in more cognitive offloading, which is associated with less deep analytical thinking. Younger users, in particular, showed higher dependence on AI tools and lower critical thinking scores.

2️⃣ Microsoft and Carnegie Mellon University researchers report a similar trend, finding that increased reliance on generative AI weakens critical thinking skills and can lead to cognitive “atrophy,” where users lose the ability to evaluate information independently. Participants reported less confidence in their own judgment when AI was available, shifting from active problem-solving to passive oversight.

📌 What This Means for AI Ethics

The implications are clear: AI tools are shaping not just what we think, but how we think. As AI becomes more embedded in decision-making processes, are we losing the ability to challenge its outputs?

At MAIEI, we believe AI literacy must go beyond learning how to use AI—it must teach people, especially young people, when to question it.

This means:

✅ Building AI systems that enhance, not replace, human judgment.

✅ Incentivizing critical thinking in education and workplaces, rather than passive AI reliance.

✅ Ensuring transparency in AI decision-making, so users don’t just “trust the model” without scrutiny.

As AI transforms our cognitive landscape, the challenge isn’t just about smarter algorithms—it’s about smarter humans.

Please share your thoughts with the MAIEI community in the comments below.

💬 Your AI Ethics Question, Answered:

In each edition, we highlight a question from the MAIEI community and share our insights. Have a question on AI ethics? Send it our way, and we may feature it in an upcoming edition!

📚 This Edition’s Poll: Share Your Perspective

We’re curious to get a sense of where readers stand on AI governance, especially as regulatory approaches continue to diverge across regions. The Paris AI Action Summit highlighted the growing divide between public interest AI governance and deregulation narratives, with some countries pushing for stronger oversight while others prioritize industry-led approaches.

As AI becomes more embedded in critical sectors, from finance and healthcare to national security, the debate over who should govern AI is more relevant than ever. How should AI governance be structured, and which model do you think is most effective?

International Cooperation (Global Standards & Treaties) – AI governance should be coordinated at a global level through agreements and regulatory frameworks that ensure consistency across borders.

National Regulation (Government-led Oversight) – Each country should establish its own AI laws and enforcement mechanisms tailored to national interests and priorities.

Industry Self-Regulation (Corporate-led Governance) – AI companies should take the lead in setting best practices and ethical guidelines, with minimal government intervention.

Public Interest AI Governance (Civil Society & Multi-Stakeholder Approach) – AI oversight should be shaped by a combination of government, academia, advocacy groups, and the broader public to ensure alignment with societal values.

Hybrid Approach (Mix of National, Industry, and Public Oversight) – A blended model combining government regulation, industry innovation, and civil society engagement to balance accountability and progress.

📊 Results from Our Last Poll

Our latest informal poll indicates that prioritizing open-source AI is the most preferred approach to AI model development, with 58% of respondents advocating for an open ecosystem that fosters transparency, collaboration, and accessibility. This aligns with broader discussions on the role of open-source AI in driving innovation while ensuring accountability.

A hybrid approach, balancing both open-source and proprietary models, was the second most popular choice, securing 32% of the vote. This suggests that while openness is valued, many believe there is still a role for controlled access and commercialization in AI development.

By contrast, closed-source AI for safety and regulated access received minimal support, with each garnering 5% of the vote, reflecting a lower preference for restrictive or proprietary AI models. Notably, minimal restrictions received 0%, reinforcing that most respondents believe AI development should involve some level of governance and structure.

Key Takeaways:

Open-source AI dominates as the preferred development model, emphasizing the need for transparency, accessibility, and collaborative progress.

A hybrid approach finds strong support, suggesting that a balance between open and proprietary models may be the most pragmatic way forward.

Minimal support for fully closed-source or highly regulated AI models indicates that respondents prioritize innovation and accessibility over strict control mechanisms.

No backing for “minimal restrictions” highlights a consensus that AI models require structured development, rather than a completely laissez-faire approach.

As AI continues to evolve, the debate over open vs. closed AI models will remain central to discussions on governance, safety, and innovation. The challenge ahead is ensuring that AI development remains both open and responsible, striking the right balance between accessibility and oversight.

Please share your thoughts with the MAIEI community in the comments below.

💭 Insights & Perspectives:

📌 Editor’s Note: Originally written in February 2024 by members of Encode Canada—a student-led advocacy group amplifying Canadian youth voices in AI—these two articles are now part of our Recess series. As AI evolves, the questions raised remain highly relevant, and we’re excited to share their insights with a wider audience.

From Promise to Practice: A Glimpse into AI-Driven Approaches to Neuroscience

AI is revolutionizing neuroscience, from accelerating brain mapping to enhancing diagnostics and treatment strategies. This piece examines how AI is being used to decode neural activity, identify biomarkers for neurological disorders, and push the boundaries of cognitive research. As these technologies advance, ethical concerns around privacy, bias, and human oversight remain critical considerations.

To dive deeper, read the full article here.

AI Chatbots: The Future of Socialization

From ELIZA in the 1960s to today’s advanced AI models, chatbots have evolved from simple scripted programs to sophisticated digital companions. This piece explores how AI chatbots are reshaping human interaction, from digital companionship to their growing role in mental health and education. While chatbots offer new opportunities for connection, concerns around bias, misinformation, and ethical risks remain. What does the rise of AI-driven socialization mean for the future of human relationships?

To dive deeper, read the full article here.

❤️ Support Our Work

Help us keep The AI Ethics Brief free and accessible for everyone by becoming a paid subscriber on Substack for the price of a coffee or making a one-time or recurring donation at montrealethics.ai/donate.

Your support sustains our mission of Democratizing AI Ethics Literacy, honours Abhishek Gupta’s legacy, and ensures we can continue serving our community.

For corporate partnerships or larger donations, please contact us at support@montrealethics.ai.

🔬 Research Summaries:

Evaluating the Social Impact of Generative AI Systems in Systems and Society

Generative AI models across text, image, and video modalities have advanced rapidly, and research has highlighted their wide-ranging social impacts. Yet, no standard framework exists for evaluating these impacts or determining what should be assessed. Our framework identifies various categories of social impacts, such as bias, privacy, and environmental costs, while discussing evaluation methods tailored to these concerns. By analyzing limitations in current approaches and providing actionable recommendations, we aim to lay the groundwork for standardized, context-sensitive evaluations of generative AI systems.

To dive deeper, read the full summary here.

Ten Simple Rules for Good Model-sharing Practices

Computational models are complex scientific constructs that have become essential for us to better understand the world. Many models are valuable for peers within and beyond disciplinary boundaries. This paper suggests 10 simple rules to both (1) ensure you share models in a way that is at least “good enough,” and (2) enable others to lead the change towards better model-sharing practices.

To dive deeper, read the full summary here.

Incentivized Symbiosis: A Paradigm for Human-Agent Coevolution

This paper introduces Incentivized Symbiosis, a conceptual framework designed to establish a social contract between humans and AI agents. By emphasizing trust, accountability, and transparency as foundational principles, the framework explores how to foster cooperative relationships that align human and AI incentives. It provides a forward-looking perspective on how humans and AI can coevolve across various sectors, including finance, governance, cultural production, and identity management. The paper examines the dynamics of human-AI interactions, offering a foundational guide for interdisciplinary research and discussions on structuring these relationships in a rapidly evolving technological landscape.

To dive deeper, read the full summary here.

📄 Article Summaries:

Meta’s Yann LeCun predicts ‘new paradigm of AI architectures’ within 5 years and ‘decade of robotics’ - TechCrunch

What happened: Speaking at Davos in January, Meta’s Chief AI Scientist, Yann LeCun, predicted a shift in how AI systems will be built over the next 3-5 years. Citing current LLMs’ limited ability to understand the physical world, reason, plan complex tasks, and maintain persistent memory, LeCun expects the AI landscape over the next 10 years to center more on “world models” and robotics.

Why it matters: Big Tech companies and their investors have bet heavily on transformer-based LLMs despite the four shortcomings LeCun identifies. A paradigm shift toward different model types could trigger another DeepSeek-esque wave in the industry.

Between the lines: Subtle moves by OpenAI and Meta into robotics signal that these companies are starting to look for value beyond language models. Consequently, the value of LLMs could shift from their language skills alone to how well they can be integrated into physical systems.

To dive deeper, read the full article here.

Experts warn DeepSeek is 11 times more dangerous than other AI chatbots - TechRadar

What happened: Research by Enkrypt AI found that DeepSeek was up to 11 times more likely than other models to be jailbroken and to produce harmful content. Identified vulnerabilities included generating insecure code, and nearly half of all tests conducted (45%) bypassed its safety protocols.

Why it matters: Several of China’s neighbors, such as Taiwan and South Korea, have restricted access to DeepSeek over security concerns, while some European countries are investigating the issue.

Between the lines: Now that the dust has settled around DeepSeek, its vulnerabilities are on full display, giving a more complete picture of the R1 model. That it sent shockwaves through the AI space despite these vulnerabilities shows how volatile the AI market is.

To dive deeper, read the full article here.

Evaluating Security Risk in DeepSeek and Other Frontier Reasoning Models - Cisco

What happened: This article offers a more direct cybersecurity comparison of DeepSeek with other top-end models. Its results show that DeepSeek did not block a single one of the research team’s jailbreak attempts.

Why it matters: As LLMs gain wider use around the globe, cybersecurity will move to center stage, with governments paying increasing attention to the use of foreign models.

Between the lines: Where governments allow it, how much users engage with DeepSeek will depend on a trade-off between cybersecurity concerns and curiosity, especially since the model is widely accessible thanks to being (somewhat) open source.

To dive deeper, read the full article here.

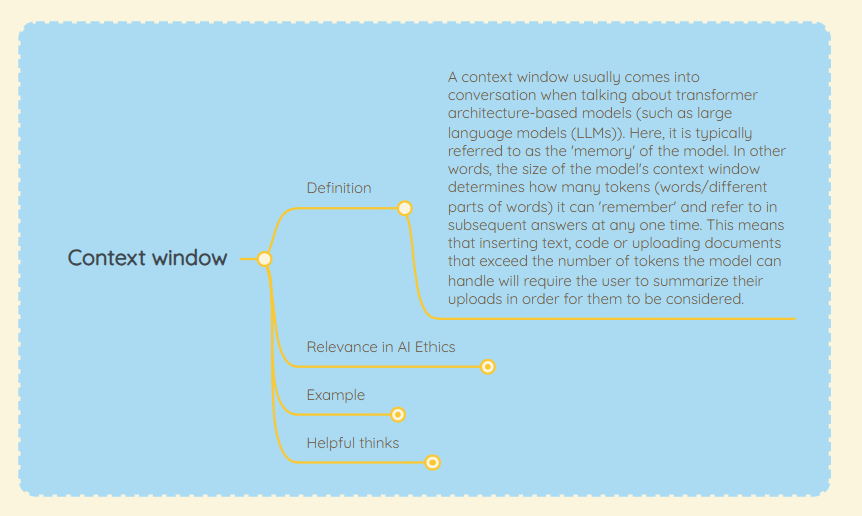

📖 From Our Living Dictionary:

What do we mean by “Context Window”?

👇 Learn more about why it matters in AI Ethics via our Living Dictionary.

🌐 From Elsewhere on the Web:

Anatomy of an AI Coup - Tech Policy Press

This article explores the growing consolidation of AI power among a small group of elite technology leaders, including Elon Musk and his Department of Government Efficiency (DOGE), raising concerns about democratic accountability and regulatory oversight. The piece outlines how AI governance is increasingly shaped by private interests rather than public institutions, with industry giants steering policy discussions and decision-making. As AI continues to embed itself into critical infrastructure and national security frameworks, the article calls for stronger democratic safeguards to prevent AI governance from becoming an unchecked tool of corporate influence.

To dive deeper, read more details here.

Who’s using AI the most? The Anthropic Economic Index breaks down the data - VentureBeat

A new report from Anthropic, the AI company behind Claude, offers a data-driven view of how businesses and professionals are integrating AI into their work. The Anthropic Economic Index, released on February 10, analyzes AI usage across industries, drawing on millions of anonymized conversations with Claude. The report suggests that AI is currently augmenting jobs more often than replacing them, while also exposing a growing AI wage divide, where some sectors benefit from AI-driven productivity while others risk displacement. As AI adoption accelerates, the findings underscore the need for businesses to equip workers with the skills to navigate an increasingly automated economy.

To dive deeper, read more details here.

💡 In Case You Missed It:

Do Less Teaching, Do More Coaching: Toward Critical Thinking for Ethical Applications of Artificial Intelligence

With new online educational platforms, a trend in pedagogy is to coach rather than teach. Without developing a critically evaluative attitude, we risk falling into blind and unwarranted faith in AI systems. For sectors such as healthcare, this could prove fatal.

To dive deeper, read the full article here.

✅ Take Action:

We’d love to hear from you, our readers, about any recent research papers, articles, or newsworthy developments that have captured your attention. Please share your suggestions to help shape future discussions!
